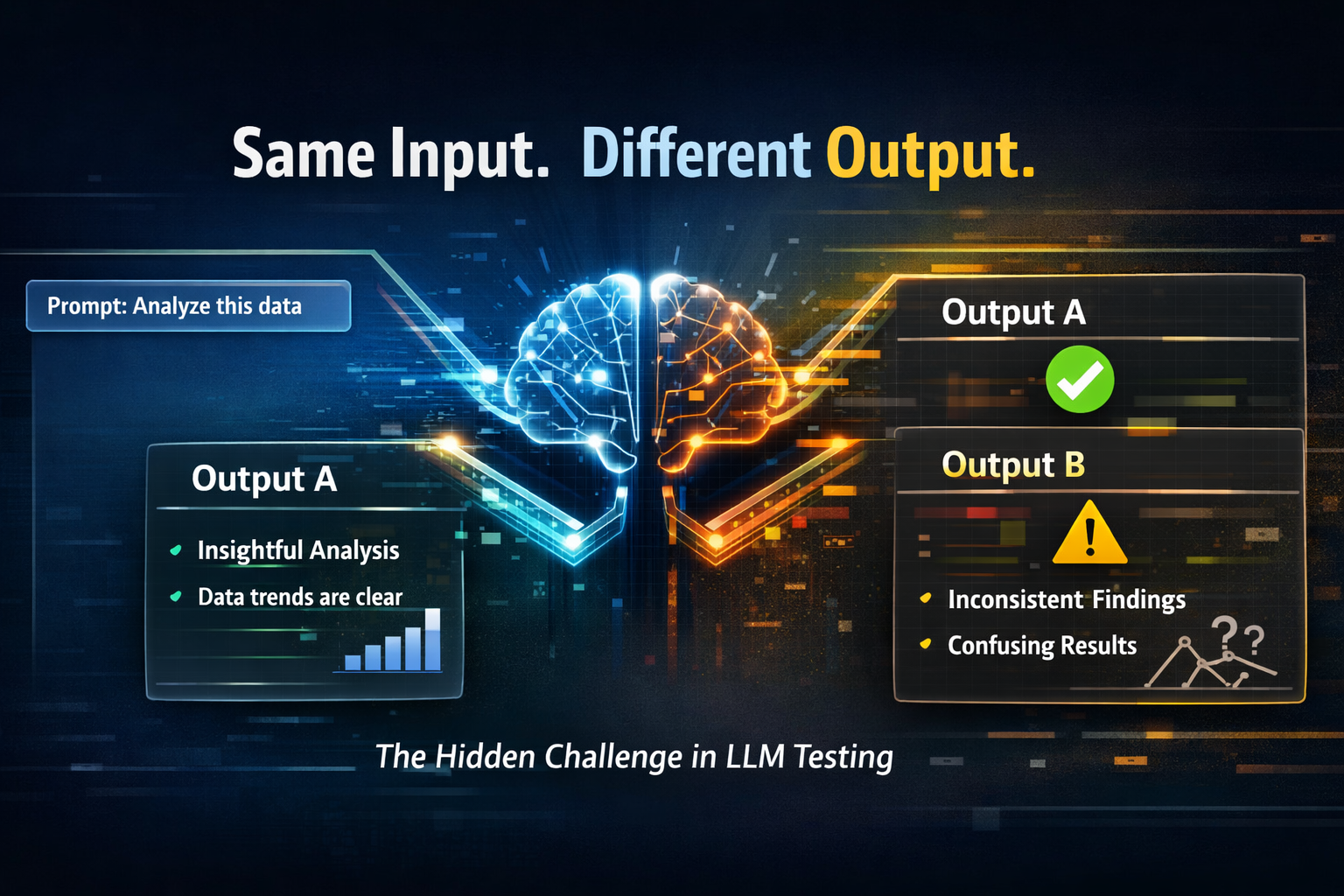

The Reproducibility Problem in LLM Testing: Same Input, Different Output

2026-04-11

Introduction

Ever asked an AI the same question twice and gotten two totally different answers? Frustrating, right? You’re not alone. The Reproducibility Problem in LLM testing is one of the biggest headaches developers, researchers, and businesses face today.

Unlike traditional software, where the same input guarantees the same output, large language models (LLMs) don’t always play by those rules. One minute they’re spot-on, the next—they drift. And when you’re trying to test, debug, or deploy AI systems, that inconsistency can feel like chasing shadows.

So, what’s really going on here? Why is consistency so hard to achieve? And more importantly—how can you handle it without losing your mind?

Let’s dive in.

What Is the Reproducibility Problem in LLMs?

In simple terms, the Reproducibility Problem in LLMs refers to the inability to consistently generate the same output for the same input.

In traditional systems:

- Input A → Output B (every single time)

In LLMs:

- Input A → Output B (first run)

- Input A → Output C (second run)

- Input A → Output D (third run)

Yep, same input, different outputs. That’s the core issue.

This unpredictability isn’t always bad—it can make AI feel more human and creative. But when you’re testing systems or building production-grade applications, it becomes a serious challenge.

Why Do LLMs Produce Different Outputs?

Alright, let’s break it down. There’s no single culprit here—it’s more like a mix of factors working together.

1. Sampling and Randomness

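LLMs don't simply pick the single most likely next token. At each step, the model assigns a probability to every token in its vocabulary, and the decoder usually samples from that distribution.

That means:

- Each candidate token has a probability

- The decoder draws one at random, weighted by those probabilities

Even a tiny bit of randomness can change one early token. Every later token is conditioned on it, so the sentence structure or meaning can diverge from there.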

👉 Parameters like:

- Temperature

- Top-k

- Top-p (nucleus sampling)

…directly influence this behavior.
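To make those three knobs concrete, here is a minimal sketch of one sampling step over a toy four-token vocabulary. The vocabulary, the logit values, and the function names are all made up for illustration, and real inference stacks implement these filters with their own details, so treat this as a picture of the idea rather than any provider's actual decoder.

```python
import math
import random

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities, scaled by a temperature > 0."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def filter_top_k_top_p(probs, top_k=None, top_p=1.0):
    """Keep the top_k most likely tokens, then the smallest prefix whose mass reaches top_p."""
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    if top_k is not None:
        ranked = ranked[:top_k]
    kept, mass = [], 0.0
    for i in ranked:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    total = sum(probs[i] for i in kept)
    return {i: probs[i] / total for i in kept}  # renormalize the survivors

def sample_token(vocab, logits, temperature=1.0, top_k=None, top_p=1.0, rng=random):
    """Draw one token from the filtered, renormalized distribution."""
    candidates = filter_top_k_top_p(softmax(logits, temperature), top_k, top_p)
    ids = list(candidates)
    weights = [candidates[i] for i in ids]
    return vocab[rng.choices(ids, weights=weights, k=1)[0]]

vocab = ["reliable", "consistent", "stable", "flaky"]  # toy vocabulary
logits = [2.0, 1.8, 1.5, 0.2]                          # made-up scores

# Same input, differently seeded generators: more than one token shows up.
picks = {sample_token(vocab, logits, rng=random.Random(seed)) for seed in range(50)}
print(picks)

# With top_k=1 only the most likely token survives, so the pick never changes.
print({sample_token(vocab, logits, top_k=1, rng=random.Random(seed)) for seed in range(50)})
```

The takeaway: the randomness lives in the final draw, and top-k and top-p only decide which tokens are allowed into that draw.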

2. Temperature Settings

Temperature controls how “creative” or “deterministic” the model is.

- Low temperature (0–0.2): More predictable, repetitive

- High temperature (0.7–1): More diverse, less consistent

So if your temperature isn’t fixed—or is set too high—you’re basically inviting variability.
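Here is a small sketch of what temperature does to the token probabilities. It assumes the common formulation where logits are divided by the temperature before the softmax, and it treats temperature 0 as plain greedy selection. The logits are invented numbers, and `token_probabilities` is an illustrative name, not a library function.

```python
import math

def token_probabilities(logits, temperature):
    """Softmax over logits / temperature; temperature 0 is treated as greedy argmax."""
    if temperature == 0:
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.8, 1.5, 0.2]  # made-up scores for four candidate tokens

for t in (0, 0.2, 0.7, 1.0):
    probs = token_probabilities(logits, t)
    print(f"T={t}: " + ", ".join(f"{p:.2f}" for p in probs))
```

As the temperature drops, more of the probability mass piles onto the top-scoring token, which is why low settings feel predictable and high settings feel varied.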

3. Non-Deterministic Model Behavior

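Even with the same settings, some LLM systems are non-deterministic at the system level due to:

- Parallel processing (floating-point operations may be combined in a different order from run to run)

- Hardware differences (GPU/CPU variations)

- Backend optimizations (for example, how requests are batched together)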

In other words, the system itself introduces subtle differences.
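One well-known mechanism behind this kind of drift is that floating-point addition is not associative: summing the same numbers in a different order can give a slightly different result. The snippet below shows that in plain Python. How much this matters for a given model and serving stack is an assumption you would need to verify for your own setup.

```python
# The same three numbers, added in two different groupings.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)

print(left_to_right == right_to_left)
print(left_to_right, right_to_left)
print(abs(left_to_right - right_to_left))
```

The gap is tiny, but when two candidate tokens have nearly equal scores, a difference at this scale can be enough to flip which one ranks first, and everything generated afterwards follows the new path.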

4. Model Updates and Versioning

Here’s a sneaky one.

LLM providers often update models behind the scenes. So:

- Same prompt today ≠ same result tomorrow

That’s a nightmare for testing pipelines.

5. Prompt Sensitivity

LLMs are extremely sensitive to input phrasing.

Even:

- A comma

- A word change

- Formatting differences

…can lead to different outputs. Now imagine combining that with randomness—yikes.

Real-World Impact of the Reproducibility Problem

This isn’t just a “technical annoyance”—it has real consequences.

1. Debugging Becomes Painful

If a bug appears once but not again, how do you fix it?

Developers often struggle to:

- Reproduce issues

- Validate fixes

- Ensure stability

2. Testing Pipelines Break Down

Traditional testing relies on consistency. But with LLMs:

- Unit tests fail randomly

- Expected outputs don’t match

- CI/CD pipelines become unreliable

3. Loss of Trust

Users expect reliability. If your AI tool:

- Gives different answers every time

- Produces inconsistent results

…it can quickly erode trust.

4. Compliance and Risk Issues

In industries like:

- Healthcare

- Finance

- Legal

Consistency isn’t optional—it’s critical.

How to Handle the Reproducibility Problem in LLMs

Alright, enough doom and gloom—let’s talk solutions.

1. Set Temperature to Zero (or Near Zero)

Want more consistent outputs?

👉 Set:

- Temperature = 0

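At temperature 0, decoding is typically greedy: the most likely token is chosen at every step. This removes most sampling randomness and makes outputs far more predictable, although system-level factors can still cause occasional variation.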

2. Use Deterministic Settings

Control your sampling parameters:

- Fix top-k and top-p

- Avoid unnecessary randomness

The goal? Minimize variability.
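One way to keep these settings from drifting between test runs is to pin them in a single immutable object. The sketch below is hypothetical: the class name, the field names, and the default values are assumptions, and not every provider exposes every one of these parameters, so map them onto whatever your API actually supports.

```python
from dataclasses import dataclass, asdict

@dataclass(frozen=True)
class GenerationSettings:
    """Hypothetical settings bundle; field names are illustrative, not a real API."""
    temperature: float = 0.0  # no sampling randomness
    top_p: float = 1.0        # do not truncate by cumulative probability
    top_k: int = 1            # only the most likely token is eligible
    seed: int = 1234          # only useful if your backend accepts a seed
    max_tokens: int = 256

TEST_SETTINGS = GenerationSettings()

# Pass the same dict to every test call instead of retyping the values.
print(asdict(TEST_SETTINGS))
```

Because the dataclass is frozen, a test that tries to tweak a value in place fails loudly instead of silently changing the conditions for every test that runs after it.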

3. Version Your Prompts and Models

Treat prompts like code:

- Store versions

- Track changes

- Document behavior

Also:

- Lock model versions when possible
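A lightweight way to treat prompts like code is to fingerprint the exact combination of prompt text, model identifier, and settings, then store that fingerprint next to every result. This is a minimal sketch: the `fingerprint` helper and the model name `example-model-2026-01` are invented for illustration.

```python
import hashlib
import json

def fingerprint(prompt_template: str, model_id: str, settings: dict) -> str:
    """Short, stable hash of everything that defines a test condition."""
    payload = json.dumps(
        {"prompt": prompt_template, "model": model_id, "settings": settings},
        sort_keys=True,      # key order must not affect the hash
        ensure_ascii=False,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]

settings = {"temperature": 0}
v1 = fingerprint("Summarize the ticket in one sentence.", "example-model-2026-01", settings)
v2 = fingerprint("Summarize the ticket, in one sentence.", "example-model-2026-01", settings)

# A single added comma produces a different fingerprint.
print(v1, v2, v1 == v2)
```

If two runs disagree and their fingerprints differ, you know the conditions changed before you start blaming the model.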

4. Implement Output Validation

Instead of expecting exact matches:

- Use semantic similarity checks

- Validate intent rather than exact wording

Tools like:

…can help here.
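As a rough illustration of validating by similarity rather than exact match, the sketch below compares outputs with cosine similarity over simple word counts. This is a deliberately crude stand-in: a real semantic check would use embeddings from a model of your choice, and the 0.6 threshold is an arbitrary assumption you would tune on your own data.

```python
import math
import re
from collections import Counter

def bag_of_words(text):
    """Lowercased word counts; a crude stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine_similarity(a, b):
    va, vb = bag_of_words(a), bag_of_words(b)
    dot = sum(va[t] * vb[t] for t in va)
    norm = math.sqrt(sum(c * c for c in va.values())) * math.sqrt(sum(c * c for c in vb.values()))
    return dot / norm if norm else 0.0

def passes(output, reference, threshold=0.6):
    """Accept the output if it is close enough to the reference."""
    return cosine_similarity(output, reference) >= threshold

reference = "The refund will arrive within five business days."
close_output = "Your refund will arrive within five business days."
unrelated_output = "Please restart the router and try again."

print(passes(close_output, reference))
print(passes(unrelated_output, reference))
```

Word overlap misses paraphrases and can be fooled by negation, which is exactly why embedding-based or model-graded checks are usually preferred for anything beyond a smoke test.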

5. Use Golden Datasets

Create a dataset of:

- Inputs

- Expected acceptable outputs

Then:

- Compare results against a range, not a single answer
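Here is one possible shape for a golden dataset check, where each input maps to a set of acceptable answers instead of a single expected string. The dataset entries, the `normalize` rules, and the `fake_model` stub are all invented for the example; in practice `generate` would wrap your real model call.

```python
def normalize(text):
    """Lowercase, collapse whitespace, and drop trailing punctuation."""
    return " ".join(text.lower().split()).rstrip(".!")

GOLDEN = [
    {"input": "What is the capital of France?",
     "acceptable": ["Paris", "The capital of France is Paris"]},
    {"input": "Is 17 a prime number?",
     "acceptable": ["Yes", "Yes, 17 is prime", "Yes, 17 is a prime number"]},
]

def evaluate(generate, golden=GOLDEN):
    """Return the (input, output) pairs that match none of the acceptable answers."""
    failures = []
    for case in golden:
        output = generate(case["input"])
        allowed = {normalize(a) for a in case["acceptable"]}
        if normalize(output) not in allowed:
            failures.append((case["input"], output))
    return failures

def fake_model(prompt):
    """Stub standing in for a real model call."""
    canned = {"What is the capital of France?": "paris.",
              "Is 17 a prime number?": "Maybe"}
    return canned[prompt]

failures = evaluate(fake_model)
print(failures)
```

The first answer passes despite different casing and punctuation, while the second is flagged because it matches nothing in the acceptable set.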

6. Log Everything

Seriously—log everything.

Track:

- Inputs

- Outputs

- Parameters

- Model versions

This makes debugging way easier.
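A minimal sketch of what "log everything" can look like: one JSON record per call, appended to a JSON Lines file. The field names, the helper names, and the model version string are assumptions made for this example, not a required schema.

```python
import json
import uuid
from datetime import datetime, timezone

def make_record(prompt, output, params, model_version):
    """Bundle everything needed to replay or audit a single call."""
    return {
        "id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "input": prompt,
        "output": output,
        "parameters": params,
        "model_version": model_version,
    }

def append_jsonl(path, record):
    """Append one record per line so the log stays easy to grep and stream."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, ensure_ascii=False) + "\n")

record = make_record(
    prompt="Summarize the ticket in one sentence.",
    output="Customer cannot reset their password.",
    params={"temperature": 0, "top_p": 1.0},
    model_version="example-model-2026-01",  # hypothetical identifier
)
print(json.dumps(record, indent=2))
```

With records like this, a surprising output can be traced back to the exact prompt, parameters, and model version that produced it.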

7. Use Multiple Runs and Averaging

Run the same prompt multiple times:

- Analyze patterns

- Identify consistent outputs

This reduces the influence of one-off outlier responses on your conclusions.
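For short, categorical answers, the multi-run idea can be sketched as a majority vote plus an agreement rate. The `flaky_model` stub below just cycles through canned answers so the example stays deterministic; it stands in for a real model call, and the function names are illustrative.

```python
from collections import Counter
from itertools import cycle

def consistency_report(generate, prompt, runs=10):
    """Run the same prompt several times and summarize how often outputs agree."""
    outputs = [generate(prompt) for _ in range(runs)]
    counts = Counter(outputs)
    answer, votes = counts.most_common(1)[0]
    return {"majority": answer, "agreement": votes / runs, "distinct": len(counts)}

# Stub that imitates an inconsistent model with a fixed, repeating pattern.
_canned = cycle(["42", "42", "forty-two", "42", "41"])

def flaky_model(prompt):
    return next(_canned)

report = consistency_report(flaky_model, "What is 6 * 7?", runs=10)
print(report)
```

A low agreement rate is a useful signal on its own: it tells you the prompt or settings need work before any single output can be trusted. For free-form text you would group outputs by similarity rather than exact equality.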

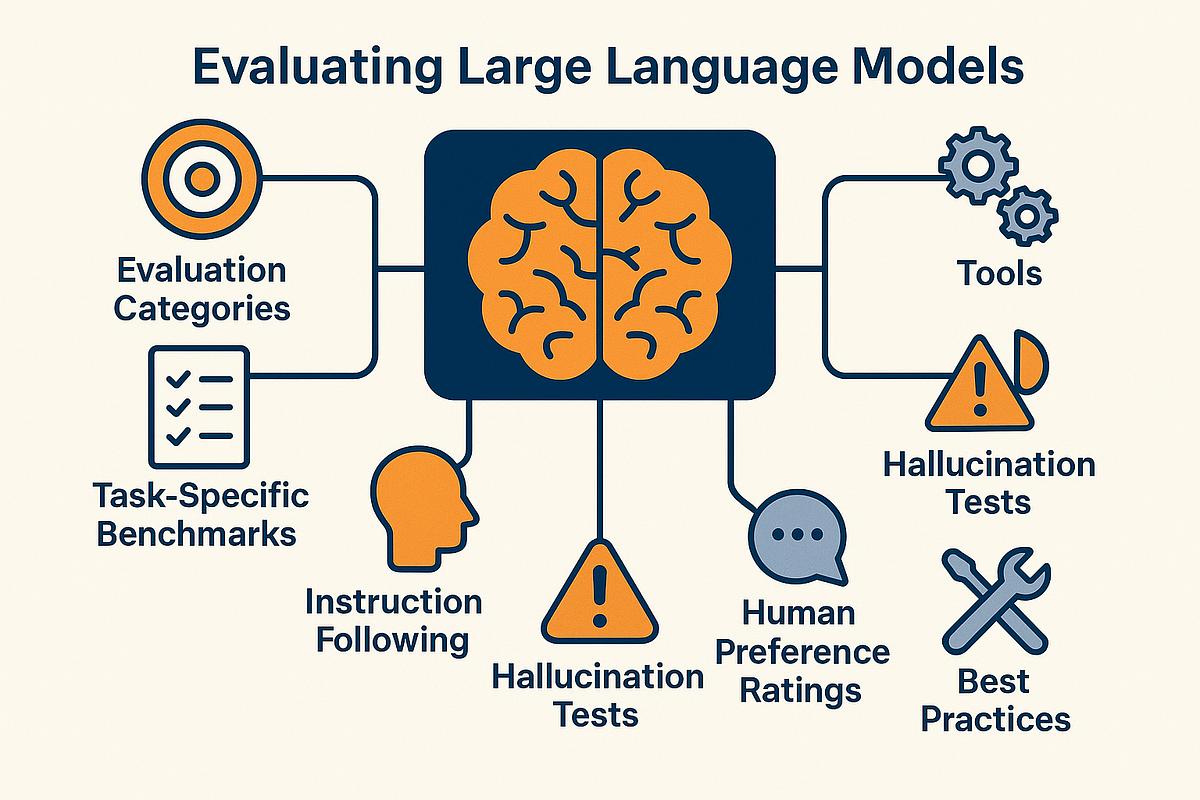

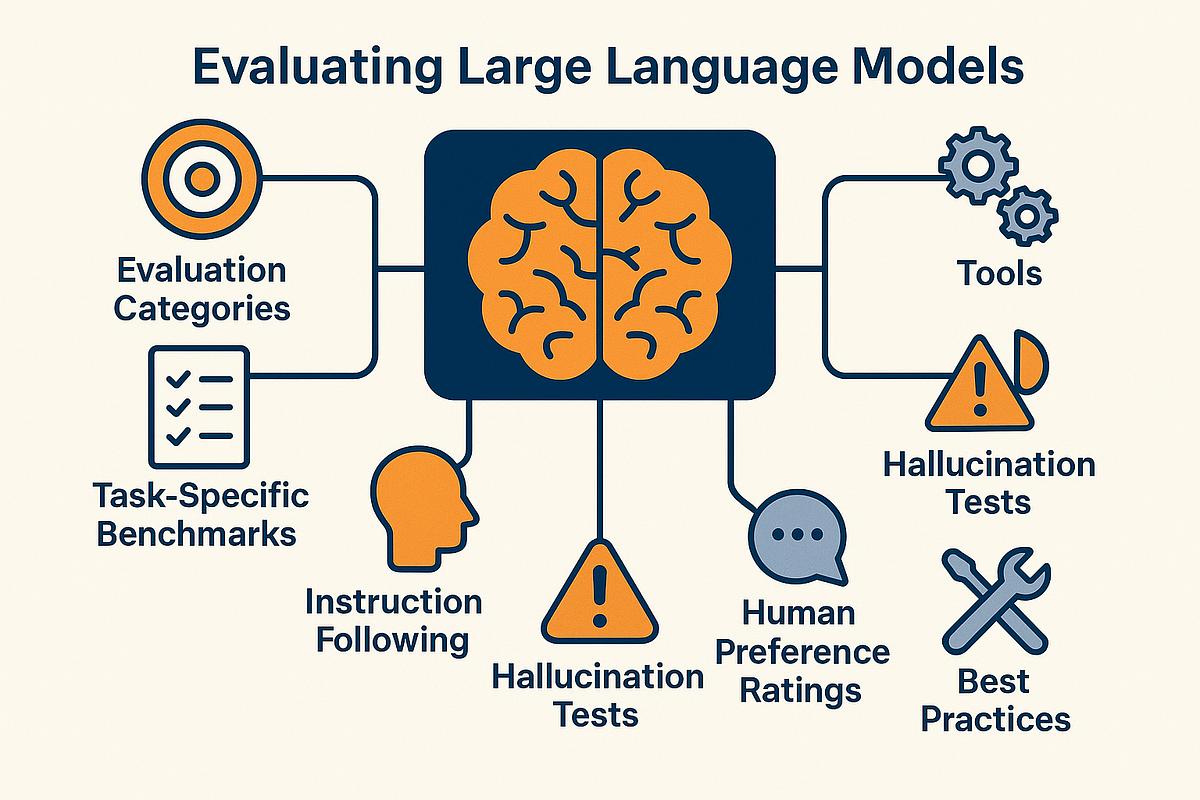

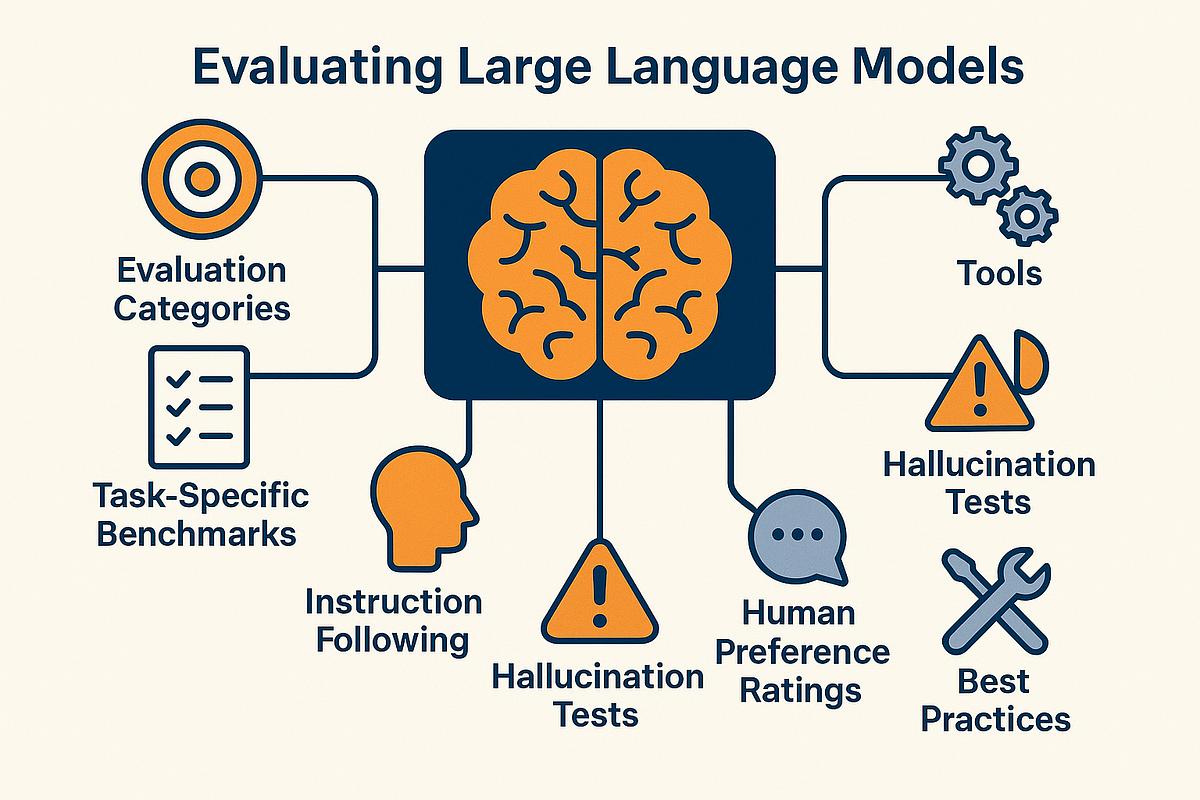

8. Build Robust Evaluation Metrics

Instead of exact matching, use:

- BLEU / ROUGE (with caution)

- Embedding similarity

- Human evaluation
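To show why overlap metrics deserve that "with caution" label, here is a simplified unigram-overlap F1 score in the spirit of ROUGE-1. It is not the official ROUGE implementation, and the sentences are invented for the demonstration.

```python
import re
from collections import Counter

def unigram_f1(candidate, reference):
    """F1 over shared word counts; a simplified ROUGE-1-style score."""
    cand = Counter(re.findall(r"[a-z0-9]+", candidate.lower()))
    ref = Counter(re.findall(r"[a-z0-9]+", reference.lower()))
    overlap = sum((cand & ref).values())  # multiset intersection
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)

reference = "The cat sat on the mat"
paraphrase = "The feline rested on the rug"  # similar meaning, different words
wrong = "The cat sat on the dog"             # different meaning, similar words

print(round(unigram_f1(paraphrase, reference), 3))
print(round(unigram_f1(wrong, reference), 3))
```

The wrong sentence outscores the paraphrase because it shares more words with the reference, which is the core weakness of surface-overlap metrics and the reason to pair them with embedding similarity or human review.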

How Trusys Solves the Reproducibility Problem in LLMs

Trusys addresses the Reproducibility Problem in LLMs by bringing structure and consistency to an otherwise unpredictable process. Instead of attempting to eliminate variability entirely, Trusys focuses on controlling and managing it through standardized execution environments, fixed model parameters, and robust prompt versioning. This ensures that every test runs under consistent conditions, making outputs more reliable and easier to analyze. Additionally, Trusys enables deterministic testing workflows and evaluates responses using semantic similarity rather than exact matches, allowing for more practical and meaningful validation. With comprehensive logging, multi-run analysis, and seamless integration into existing development pipelines, Trusys empowers teams to improve reproducibility, streamline debugging, and build more dependable AI systems at scale.

Best Practices for Developers

Let’s keep it practical:

- Don’t expect perfect reproducibility—it’s not realistic

- Design systems that tolerate variation

- Focus on output quality, not exact wording

- Build fallback mechanisms

Think of LLMs less like calculators and more like collaborators.
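A fallback mechanism can be as simple as retrying until an output passes validation and returning a safe default if none does. The sketch below uses a stub model and `str.isdigit` as the validator; both are placeholders, and the function name and default message are assumptions for illustration.

```python
def generate_with_fallback(generate, prompt, is_valid, max_attempts=3,
                           fallback="No reliable answer available."):
    """Retry until an output passes validation; otherwise return a safe default."""
    for attempt in range(1, max_attempts + 1):
        output = generate(prompt)
        if is_valid(output):
            return output, attempt
    return fallback, max_attempts

# Stub model that returns malformed output twice before producing a number.
_responses = iter(["n/a", "", "42"])

def stub_model(prompt):
    return next(_responses)

result = generate_with_fallback(stub_model, "What is 6 * 7?", is_valid=str.isdigit)
print(result)
```

Returning the attempt count alongside the output makes it easy to log how often retries were needed, which is itself a handy stability metric.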

FAQs

1. Why is reproducibility important in LLM testing?

Because it ensures reliability, helps debugging, and builds user trust.

2. Can LLM outputs ever be fully deterministic?

Not completely—but you can get very close by controlling parameters like temperature and sampling.

3. Does temperature = 0 guarantee the same output?

Not always. It reduces randomness significantly, but system-level factors can still cause variation.

4. How do companies handle this issue in production?

They use logging, versioning, evaluation metrics, and controlled environments to manage variability.

5. Is the reproducibility problem a flaw or a feature?

Honestly, it’s both. It enables creativity but complicates testing.

Wrapping It All Up

The Reproducibility Problem in LLM testing isn’t going away anytime soon. It’s baked into how these models work. But here’s the thing—you don’t have to fight it blindly.

By understanding why it happens and applying the right strategies—like controlling randomness, versioning your systems, and rethinking evaluation—you can turn a frustrating problem into a manageable one.

At the end of the day, LLMs aren’t broken—they’re just different. And once you adjust your mindset and tools, you’ll be in a much better position to build reliable, scalable AI systems.

Stop guessing.

Start measuring.

Join teams building reliable AI with TruEval. Start with a free trial, no credit card required. Get your first evaluation running in under 10 minutes.

Questions about Trusys?

Our team is here to help. Schedule a personalized demo to see how Trusys fits your specific use case.

Book a Demo

Ready to dive in?

Check out our documentation and tutorials. Get started with example datasets and evaluation templates.

Start Free Trial

Free Trial

No credit card required

10 Min

To first evaluation

24/7

Enterprise support
