End-to-End AI Lifecycle Governance: Securing AI from Development to Deployment and Beyond

2026-03-20

Introduction

Artificial Intelligence is transforming how enterprises operate—from automating workflows to enabling real-time decision-making. However, as AI systems become more deeply embedded in business-critical processes, they also introduce new layers of risk, complexity, and regulatory pressure.

The biggest mistake organizations make?

Treating AI governance as a single-stage problem—often limited to post-deployment monitoring.

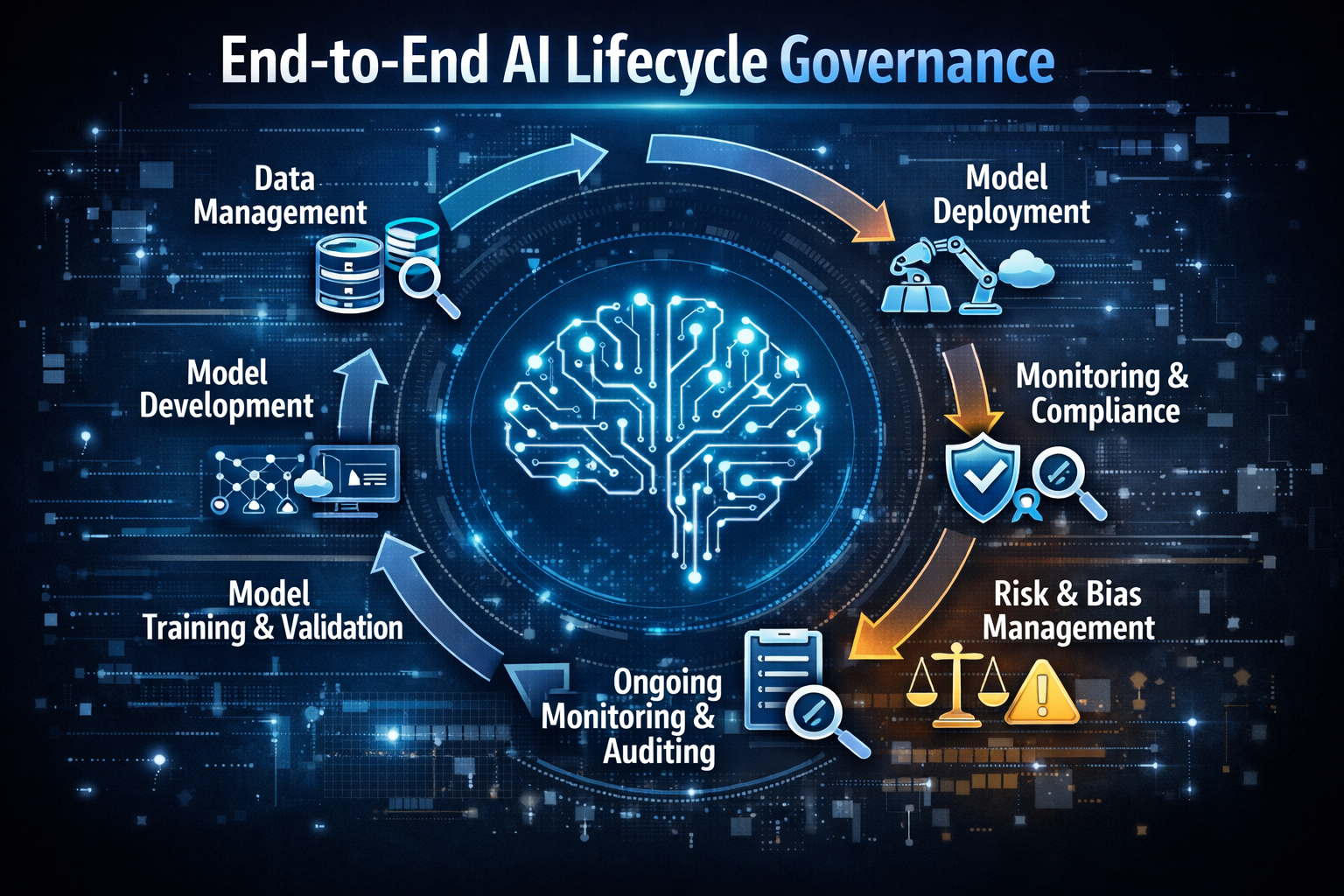

In reality, AI risk is not confined to one phase. It spans the entire lifecycle:

Development → Deployment → Monitoring → Continuous Improvement

This is where End-to-End AI Lifecycle Governance becomes essential.

By implementing governance across every stage, organizations can ensure:

- Full visibility into AI systems

- Continuous compliance with evolving regulations

- Scalable and secure AI operations

What is AI Lifecycle Governance?

AI lifecycle governance refers to the structured management of AI systems across all stages of their lifecycle, ensuring they remain secure, compliant, transparent, and reliable.

Key Stages of the AI Lifecycle:

- Development
  - Model design and training
  - Data selection and preprocessing
  - Prompt engineering (for LLMs)

- Deployment
  - Integration into applications
  - API exposure
  - User interaction

- Monitoring
  - Performance tracking
  - Behavior analysis
  - Risk detection

- Continuous Improvement
  - Model updates
  - Feedback loops
  - Compliance updates

Without governance across each of these stages, organizations risk building AI systems that are unpredictable, non-compliant, and vulnerable to attacks.

Why AI Governance Must Be End-to-End

1. AI Risks Originate Early

Many AI vulnerabilities are introduced during development:

- Biased training data

- Weak prompt design

- Misconfigured models

If these issues are not caught early, they propagate into production.

2. Deployment Introduces New Attack Surfaces

Once deployed, AI systems interact with:

- External users

- APIs

- Third-party models

This opens the door to:

- Prompt injection attacks

- Data leakage

- Unauthorized access

3. Risks Evolve in Production

AI systems are dynamic. Over time, they can:

- Drift from original behavior

- Become less accurate

- Develop new vulnerabilities

4. Regulations Demand Continuous Compliance

AI regulations, such as the EU AI Act, increasingly require organizations to:

- Maintain transparency

- Ensure accountability

- Continuously assess risk

Governance cannot be static—it must be ongoing.

Key Challenges in AI Lifecycle Governance

🚧 Lack of Visibility

Organizations often lack a unified view of:

- Model behavior

- Data usage

- Risk exposure

🚧 Fragmented Tools

Different teams use different tools for:

- Development

- Security

- Compliance

This creates silos and inefficiencies.

🚧 Rapid AI Adoption

AI is evolving faster than governance frameworks, making it difficult to keep up.

🚧 Complexity of AI Systems

AI systems involve:

- Data pipelines

- Models

- APIs

- Infrastructure

Managing all these components requires a holistic approach.

Core Pillars of End-to-End AI Governance

🔍 1. Visibility

Gain full insight into:

- AI models

- Data flows

- System behavior

🛡️ 2. Security

Protect AI systems from:

- Adversarial attacks

- Data leakage

- Unauthorized access

📜 3. Compliance

Ensure alignment with:

- Global regulations

- Industry standards

- Internal policies

⚖️ 4. Accountability

Track:

- Model decisions

- Data sources

- System changes
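One way to make this kind of tracking tamper-evident is a hash-chained audit log, where each record stores the hash of the record before it. The sketch below is a minimal illustration only; the function names (`append_record`, `verify`) and the event fields are hypothetical and do not describe any particular platform's implementation.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash used before the first record


def _digest(event, prev_hash):
    # Serialize deterministically so the same record always hashes the same way.
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log, event):
    """Append an event (a JSON-serializable dict) linked to the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)})
    return log


def verify(log):
    """Return True only if no record was altered, removed, or reordered."""
    prev_hash = GENESIS
    for record in log:
        if record["prev"] != prev_hash:
            return False
        if _digest(record["event"], prev_hash) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical events covering a model decision and a system change.
audit_log = []
append_record(audit_log, {"type": "decision", "model": "credit-v1", "output": "approve"})
append_record(audit_log, {"type": "change", "model": "credit-v1", "action": "retrained"})
chain_ok = verify(audit_log)
```

Because every hash depends on the one before it, editing an earlier record invalidates the chain from that point on, which is what makes the log useful as audit evidence.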

🔄 5. Continuous Monitoring

Detect:

- Anomalies

- Drift

- Emerging risks

Governance Across the AI Lifecycle

🧠 Development Stage

Focus: Prevention

Key Practices:

- AI code scanning

- Bias detection

- Secure data pipelines

- Model validation

Risks Addressed:

- Prompt injection vulnerabilities

- Data poisoning

- Misconfigurations
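As an illustration of what a development-stage bias check might look like, the sketch below computes a demographic parity gap: the difference in positive-prediction rates between groups. The function name and the sample data are assumptions made for this example, and demographic parity is only one of several fairness metrics a team might choose.

```python
from collections import defaultdict


def demographic_parity_gap(predictions, groups):
    """Return (gap, rates), where gap is the largest difference in
    positive-prediction rate between any two groups."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    rates = {g: positives[g] / totals[g] for g in totals}
    return max(rates.values()) - min(rates.values()), rates


# Hypothetical binary predictions for two groups, "a" and "b".
preds = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap, rates = demographic_parity_gap(preds, groups)
```

A check like this can run in CI against a held-out evaluation set, failing the build when the gap exceeds a threshold the team has agreed on.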

🚀 Deployment Stage

Focus: Secure integration

Key Practices:

- Access controls

- API security

- Guardrails for outputs

Risks Addressed:

- Unauthorized access

- Data exposure

- Unsafe responses
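To make the idea of an output guardrail concrete, here is a minimal sketch that redacts email addresses and secret-like tokens from a model response before it reaches the user. The regular expressions and the `apply_guardrail` name are illustrative assumptions; a production guardrail would typically need broader detection than two patterns.

```python
import re

# Illustrative patterns only; real deployments usually need broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SECRET = re.compile(r"\b(?:sk|key|token)[-_][A-Za-z0-9]{16,}\b")


def apply_guardrail(text):
    """Redact sensitive-looking spans and report which kinds were found."""
    findings = []
    for label, pattern in (("email", EMAIL), ("secret", SECRET)):
        if pattern.search(text):
            findings.append(label)
            text = pattern.sub(f"[REDACTED {label.upper()}]", text)
    return text, findings


safe_text, findings = apply_guardrail(
    "Contact jane@example.com with key sk-abcdef0123456789abcd"
)
```

Returning the findings alongside the redacted text lets the calling service log the event for monitoring without storing the sensitive value itself.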

📊 Monitoring Stage

Focus: Continuous assurance

Key Practices:

- Real-time monitoring

- Risk scoring

- Behavior analysis

Risks Addressed:

- Model drift

- Performance degradation

- Emerging threats
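Model drift can be quantified in several ways. One common choice, assumed here purely for illustration, is the Population Stability Index (PSI), which compares the distribution of a feature or score in production against a baseline. The function name, bin count, and sample data below are hypothetical.

```python
import math


def psi(baseline, current, bins=10):
    """Population Stability Index of `current` relative to `baseline`.
    Bin edges are derived from the baseline's range."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    p, q = proportions(baseline), proportions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical scores: production values shifted upward by 0.5.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [v + 0.5 for v in baseline_scores]
drift = psi(baseline_scores, shifted_scores)
```

A frequently cited rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but these cut-offs are conventions rather than guarantees and should be tuned per use case.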

🔁 Continuous Improvement

Focus: Adaptation

Key Practices:

- Feedback loops

- Model retraining

- Policy updates
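A feedback loop can be as simple as tracking whether recent predictions were correct and flagging the model for retraining when accuracy falls below an agreed floor. The sketch below assumes such a policy; the `RetrainTrigger` class, the window size, and the threshold are all hypothetical choices for this example.

```python
from collections import deque


class RetrainTrigger:
    """Flags retraining when rolling accuracy drops below a minimum."""

    def __init__(self, window=100, min_accuracy=0.9):
        self.outcomes = deque(maxlen=window)  # keeps only the latest outcomes
        self.min_accuracy = min_accuracy

    def record(self, correct):
        """Record whether one prediction was later confirmed as correct."""
        self.outcomes.append(bool(correct))

    def should_retrain(self):
        # Wait for a full window so a few early errors do not trigger retraining.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return sum(self.outcomes) / len(self.outcomes) < self.min_accuracy


trigger = RetrainTrigger(window=4, min_accuracy=0.75)
for outcome in (True, True, False, False):
    trigger.record(outcome)
needs_retraining = trigger.should_retrain()
```

In practice, the trigger's decision would itself be logged and reviewed, so that retraining remains a governed change rather than an automatic one.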

Built on Trusted AI Governance Frameworks

To achieve effective lifecycle governance, organizations must align with globally recognized standards. Trusys AI integrates these frameworks directly into its platform, ensuring governance is built-in, not bolted on.

✅ OWASP Top 10 for LLM Applications

Focuses on critical AI vulnerabilities like:

- Prompt injection

- Data leakage

- Insecure outputs

✅ NIST AI Risk Management Framework

Provides a structured approach to:

- Risk identification

- Risk mitigation

- Continuous monitoring

✅ MITRE ATLAS

Helps organizations:

- Understand adversarial threats

- Simulate attack scenarios

- Strengthen AI resilience

✅ EU AI Act

Requires:

- Risk-based classification of AI systems

- Transparency

- Conformity with the obligations tied to each risk level

✅ ISO/IEC 42001

Supports:

- AI management systems

- Risk management frameworks

- Responsible AI practices

Benefits of End-to-End AI Lifecycle Governance

🌐 Full AI Visibility

Understand how your AI systems behave at every stage.

🔄 Continuous Compliance

Stay aligned with evolving regulations without disruption.

📈 Scalable AI Operations

Govern AI systems efficiently as they grow.

🛡️ Reduced Risk Exposure

Identify and mitigate risks early and continuously.

🤝 Increased Trust

Build confidence among users, regulators, and stakeholders.

How Trusys AI Enables Lifecycle Governance

Trusys AI provides a unified platform to manage AI security, governance, and monitoring across the entire lifecycle.

🔍 AI Code Scanning (Development Stage)

- Detect vulnerabilities early

- Identify prompt injection risks

- Secure AI pipelines


🛡️ AI Security & Risk Detection (Deployment Stage)

- Real-time threat detection

- Secure model integrations

- Output validation


📊 AI Monitoring & Observability (Post-Deployment)

- Track model behavior

- Detect anomalies

- Ensure continuous assurance

📜 Governance & Compliance Layer

- Map risks to global frameworks

- Generate audit-ready reports

- Ensure regulatory alignment

Real-World Use Cases

🏦 Banking & Finance

- Fair credit scoring

- Fraud detection governance

- Regulatory compliance

🏥 Healthcare

- Safe AI diagnostics

- Patient data protection

- Explainable AI decisions

💻 Enterprise SaaS

- Secure AI copilots

- Safe chatbot deployment

- Continuous monitoring

Best Practices for Implementing AI Lifecycle Governance

✅ Adopt a Unified Platform

Avoid fragmented tools—use a single platform for security, monitoring, and governance.

✅ Shift Security Left

Integrate AI security early in development.

✅ Automate Governance

Use AI-driven tools to continuously monitor and enforce policies.

✅ Align with Frameworks

Adopt frameworks and standards such as the OWASP Top 10 for LLM Applications, the NIST AI Risk Management Framework, and ISO/IEC 42001.

✅ Monitor Continuously

Governance doesn’t stop after deployment.

The Future of AI Governance

AI governance is moving toward:

- Continuous AI assurance

- Automated compliance systems

- AI-native security platforms

Organizations that embrace end-to-end governance will be better positioned to:

- Scale AI safely

- Meet regulatory demands

- Build trustworthy AI systems

Conclusion

AI is powerful—but without governance, it can become a significant risk.

End-to-End AI Lifecycle Governance ensures that AI systems remain secure, compliant, and reliable from development to deployment and beyond.

By adopting a lifecycle approach, organizations can:

- Gain full visibility

- Ensure continuous compliance

- Scale AI with confidence

🚀 Ready to Govern Your AI End-to-End?

Don’t let AI risks slip through the cracks.

Trusys AI empowers enterprises to secure, monitor, and govern AI systems across the entire lifecycle—ensuring safe, scalable, and compliant AI operations.

👉 Book a demo today and take control of your AI governance strategy.

Stop guessing.

Start measuring.

Join teams building reliable AI with TruEval. Start with a free trial, no credit card required. Get your first evaluation running in under 10 minutes.

Questions about Trusys?

Our team is here to help. Schedule a personalized demo to see how Trusys fits your specific use case.

Book a Demo

Ready to dive in?

Check out our documentation and tutorials. Get started with example datasets and evaluation templates.

Start Free Trial

