Test AI Workflow Early: Catching AI Security Risks Before They Scale

2026-04-08

Introduction

Artificial Intelligence is powering the next wave of enterprise innovation—from intelligent automation to real-time decision-making. But as AI adoption accelerates, so do the risks hidden inside development pipelines.

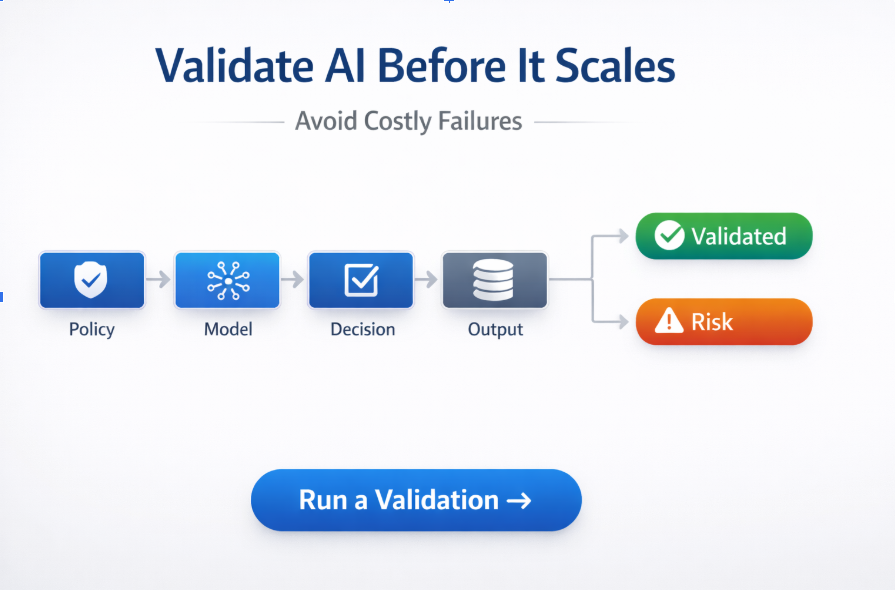

Most organizations still validate AI systems too late—often after deployment. By then, vulnerabilities like prompt injection, data leakage, and model manipulation are already embedded into production workflows.

This is why forward-thinking teams are shifting left and choosing to test AI workflow early—making security a core part of development instead of an afterthought.

At the center of this transformation is AI code scanning, enabling teams to identify and fix risks before they scale.

Why Traditional Security Doesn’t Work for AI Workflows

Traditional security tools were built for deterministic systems. AI systems operate very differently—and that’s where the gap begins.

How AI Systems Are Different

- AI systems rely on data, not just code

- Outputs are non-deterministic and context-driven

- Behavior evolves with inputs and training

- Risk spans prompts, models, and pipelines

Common Risks in AI Workflows

- Prompt injection attacks

- Sensitive data leakage

- Model drift and instability

- Third-party model dependencies

Traditional tools cannot effectively test AI workflow behavior, because they lack visibility into how AI systems think, respond, and evolve.

What It Means to Test AI Workflow

To truly secure AI systems, organizations must go beyond static code checks and start validating the entire AI lifecycle.

Testing AI Workflows Includes:

- Prompt logic and input handling

- Model configurations and guardrails

- Training and fine-tuning datasets

- API integrations and external models

- Output behavior and response patterns

Traditional vs AI Workflow Testing

Traditional Testing

Test AI Workflow

Code-level checks

End-to-end AI validation

Static analysis

Context-aware analysis

Limited scope

Full AI lifecycle coverage

Known vulnerabilities

Dynamic behavior & risk detection

By choosing to test AI workflow continuously, organizations can proactively detect risks before they impact users or business operations.

Top AI Security Risks in Development Workflows

1. Prompt Injection Attacks

Malicious inputs can override system instructions, leading to unsafe or manipulated outputs.

2. Data Leakage

AI models may unintentionally expose:

- Personally identifiable information (PII)

- Sensitive enterprise data

- Training dataset artifacts

3. Model Misconfiguration

Poor setup can result in:

- Weak guardrails

- Uncontrolled outputs

- Expanded attack surfaces

4. Third-Party Dependencies

External models and APIs introduce:

- Supply chain risks

- Limited transparency

- Unknown vulnerabilities

5. Lack of Governance

Without structured validation:

- Compliance risks increase

- Audit readiness declines

How AI Code Scanning Helps Test AI Workflow

AI code scanning plays a critical role in enabling teams to test AI workflow at every stage of development.

Core Capabilities

- Automated security validation during development

- Real-time detection of AI-specific vulnerabilities

- Policy enforcement before deployment

- Continuous monitoring after release

Shift-Left Advantage

- Catch issues earlier in the lifecycle

- Reduce remediation costs

- Accelerate deployment timelines

Instead of one-time testing, organizations can continuously test AI workflow behavior, ensuring systems remain secure as they evolve.

Built on Trusted AI Security Frameworks

Testing AI workflows effectively requires alignment with global standards.

OWASP Top 10 for LLMs

Detects:

- Prompt injection vulnerabilities

- Sensitive data exposure

- Unsafe output handling

NIST AI Risk Management Framework

Supports:

- Risk identification

- Continuous monitoring

- AI governance

MITRE ATLAS

Enables:

- Adversarial attack simulation

- Threat modeling

- Improved resilience

EU AI Act Readiness

Helps:

- Classify AI risk levels

- Ensure transparency

- Prepare for compliance

ISO/IEC 42001

Provides:

- Standardized AI governance

- Risk management frameworks

- Responsible AI practices

Benefits of Testing AI Workflow Early

💰 Reduced Costs

Fix vulnerabilities during development instead of post-deployment.

⚡ Faster Time-to-Market

Avoid last-minute security delays.

📜 Stronger Compliance

Stay aligned with global regulations from day one.

🤝 Increased Trust

Build confidence among users, stakeholders, and regulators.

How Trusys AI Enables You to Test AI Workflow

Trusys AI delivers a unified platform designed to secure AI systems from development to production.

🔍 Automated AI Workflow Testing

- Scan AI pipelines for vulnerabilities

- Detect prompt injection risks

- Analyze model behavior

🛡️ LLM Risk Detection

- Aligned with OWASP standards

- Real-time vulnerability insights

⚔️ Adversarial Testing

- Simulate real-world attacks

- Stress-test AI workflows

📊 Governance & Compliance

- Track risks across lifecycle

- Maintain audit readiness

- Support regulatory frameworks

🌍 Use Cases

- Banking & Finance: Prevent data leaks in AI advisors

- Healthcare: Ensure compliance in diagnostics

- Enterprise SaaS: Secure AI copilots and chatbots

Best Practices to Test AI Workflow Securely

✅ Integrate security early in development

✅ Continuously test AI workflow across pipelines

✅ Use adversarial testing to uncover hidden risks

✅ Secure data pipelines against leakage and poisoning

✅ Monitor AI systems in production

The Future of AI Workflow Testing

As AI adoption grows, testing and security will become inseparable.

What’s Next

- AI-native testing platforms

- Continuous AI assurance models

- Standardized compliance frameworks

Organizations that prioritize the ability to test AI workflow early and continuously will gain a competitive advantage in scalability, trust, and innovation.

Conclusion

AI is transforming industries—but without proactive validation, it also introduces critical risks.

The ability to test AI workflow early is no longer optional—it’s essential.

By embedding security into development workflows, organizations can:

- Build resilient AI systems

- Accelerate innovation

- Ensure compliance and trust

Ready to Test Your AI Workflow Before It Breaks?

Don’t wait for production failures to expose vulnerabilities.

Trusys AI helps you test AI workflow early, detect risks proactively, and deploy AI systems with confidence.

Stop guessing.

Start measuring.

Join teams building reliable AI with TruEval. Start with a free trial, no credit card required. Get your first evaluation running in under 10 minutes.

Questions about Trusys?

Our team is here to help. Schedule a personalized demo to see how Trusys fits your specific use case.

Book a Demo

Ready to dive in?

Check out our documentation and tutorials. Get started with example datasets and evaluation templates.

Start Free Trial

Free Trial

No credit card required

10 Min

To first evaluation

24/7

Enterprise support

Benefits

Specifications

How-to

Contact Us

Learn More

Test AI Workflow Early: Catching AI Security Risks Before They Scale

2026-04-08

Introduction

Artificial Intelligence is powering the next wave of enterprise innovation—from intelligent automation to real-time decision-making. But as AI adoption accelerates, so do the risks hidden inside development pipelines.

Most organizations still validate AI systems too late—often after deployment. By then, vulnerabilities like prompt injection, data leakage, and model manipulation are already embedded into production workflows.

This is why forward-thinking teams are shifting left and choosing to test AI workflow early—making security a core part of development instead of an afterthought.

At the center of this transformation is AI code scanning, enabling teams to identify and fix risks before they scale.

Why Traditional Security Doesn’t Work for AI Workflows

Traditional security tools were built for deterministic systems. AI systems operate very differently—and that’s where the gap begins.

How AI Systems Are Different

- AI systems rely on data, not just code

- Outputs are non-deterministic and context-driven

- Behavior evolves with inputs and training

- Risk spans prompts, models, and pipelines

Common Risks in AI Workflows

- Prompt injection attacks

- Sensitive data leakage

- Model drift and instability

- Third-party model dependencies

Traditional tools cannot effectively test AI workflow behavior, because they lack visibility into how AI systems think, respond, and evolve.

What It Means to Test AI Workflow

To truly secure AI systems, organizations must go beyond static code checks and start validating the entire AI lifecycle.

Testing AI Workflows Includes:

- Prompt logic and input handling

- Model configurations and guardrails

- Training and fine-tuning datasets

- API integrations and external models

- Output behavior and response patterns

Traditional vs AI Workflow Testing

Traditional Testing

Test AI Workflow

Code-level checks

End-to-end AI validation

Static analysis

Context-aware analysis

Limited scope

Full AI lifecycle coverage

Known vulnerabilities

Dynamic behavior & risk detection

By choosing to test AI workflow continuously, organizations can proactively detect risks before they impact users or business operations.

Top AI Security Risks in Development Workflows

1. Prompt Injection Attacks

Malicious inputs can override system instructions, leading to unsafe or manipulated outputs.

2. Data Leakage

AI models may unintentionally expose:

- Personally identifiable information (PII)

- Sensitive enterprise data

- Training dataset artifacts

3. Model Misconfiguration

Poor setup can result in:

- Weak guardrails

- Uncontrolled outputs

- Expanded attack surfaces

4. Third-Party Dependencies

External models and APIs introduce:

- Supply chain risks

- Limited transparency

- Unknown vulnerabilities

5. Lack of Governance

Without structured validation:

- Compliance risks increase

- Audit readiness declines

How AI Code Scanning Helps Test AI Workflow

AI code scanning plays a critical role in enabling teams to test AI workflow at every stage of development.

Core Capabilities

- Automated security validation during development

- Real-time detection of AI-specific vulnerabilities

- Policy enforcement before deployment

- Continuous monitoring after release

Shift-Left Advantage

- Catch issues earlier in the lifecycle

- Reduce remediation costs

- Accelerate deployment timelines

Instead of one-time testing, organizations can continuously test AI workflow behavior, ensuring systems remain secure as they evolve.

Built on Trusted AI Security Frameworks

Testing AI workflows effectively requires alignment with global standards.

OWASP Top 10 for LLMs

Detects:

- Prompt injection vulnerabilities

- Sensitive data exposure

- Unsafe output handling

NIST AI Risk Management Framework

Supports:

- Risk identification

- Continuous monitoring

- AI governance

MITRE ATLAS

Enables:

- Adversarial attack simulation

- Threat modeling

- Improved resilience

EU AI Act Readiness

Helps:

- Classify AI risk levels

- Ensure transparency

- Prepare for compliance

ISO/IEC 42001

Provides:

- Standardized AI governance

- Risk management frameworks

- Responsible AI practices

Benefits of Testing AI Workflow Early

💰 Reduced Costs

Fix vulnerabilities during development instead of post-deployment.

⚡ Faster Time-to-Market

Avoid last-minute security delays.

📜 Stronger Compliance

Stay aligned with global regulations from day one.

🤝 Increased Trust

Build confidence among users, stakeholders, and regulators.

How Trusys AI Enables You to Test AI Workflow

Trusys AI delivers a unified platform designed to secure AI systems from development to production.

🔍 Automated AI Workflow Testing

- Scan AI pipelines for vulnerabilities

- Detect prompt injection risks

- Analyze model behavior

🛡️ LLM Risk Detection

- Aligned with OWASP standards

- Real-time vulnerability insights

⚔️ Adversarial Testing

- Simulate real-world attacks

- Stress-test AI workflows

📊 Governance & Compliance

- Track risks across lifecycle

- Maintain audit readiness

- Support regulatory frameworks

🌍 Use Cases

- Banking & Finance: Prevent data leaks in AI advisors

- Healthcare: Ensure compliance in diagnostics

- Enterprise SaaS: Secure AI copilots and chatbots

Best Practices to Test AI Workflow Securely

✅ Integrate security early in development

✅ Continuously test AI workflow across pipelines

✅ Use adversarial testing to uncover hidden risks

✅ Secure data pipelines against leakage and poisoning

✅ Monitor AI systems in production

The Future of AI Workflow Testing

As AI adoption grows, testing and security will become inseparable.

What’s Next

- AI-native testing platforms

- Continuous AI assurance models

- Standardized compliance frameworks

Organizations that prioritize the ability to test AI workflow early and continuously will gain a competitive advantage in scalability, trust, and innovation.

Conclusion

AI is transforming industries—but without proactive validation, it also introduces critical risks.

The ability to test AI workflow early is no longer optional—it’s essential.

By embedding security into development workflows, organizations can:

- Build resilient AI systems

- Accelerate innovation

- Ensure compliance and trust

Ready to Test Your AI Workflow Before It Breaks?

Don’t wait for production failures to expose vulnerabilities.

Trusys AI helps you test AI workflow early, detect risks proactively, and deploy AI systems with confidence.

Stop guessing.

Start measuring.

Join teams building reliable AI with TruEval. Start with a free trial, no credit card required. Get your first evaluation running in under 10 minutes.

Questions about Trusys?

Our team is here to help. Schedule a personalized demo to see how Trusys fits your specific use case.

Book a Demo

Ready to dive in?

Check out our documentation and tutorials. Get started with example datasets and evaluation templates.

Start Free Trial

Free Trial

No credit card required

10 Min

To first evaluation

24/7

Enterprise support

Test AI Workflow Early: Catching AI Security Risks Before They Scale

2026-04-08

Introduction

Artificial Intelligence is powering the next wave of enterprise innovation—from intelligent automation to real-time decision-making. But as AI adoption accelerates, so do the risks hidden inside development pipelines.

Most organizations still validate AI systems too late—often after deployment. By then, vulnerabilities like prompt injection, data leakage, and model manipulation are already embedded into production workflows.

This is why forward-thinking teams are shifting left and choosing to test AI workflow early—making security a core part of development instead of an afterthought.

At the center of this transformation is AI code scanning, enabling teams to identify and fix risks before they scale.

Why Traditional Security Doesn’t Work for AI Workflows

Traditional security tools were built for deterministic systems. AI systems operate very differently—and that’s where the gap begins.

How AI Systems Are Different

- AI systems rely on data, not just code

- Outputs are non-deterministic and context-driven

- Behavior evolves with inputs and training

- Risk spans prompts, models, and pipelines

Common Risks in AI Workflows

- Prompt injection attacks

- Sensitive data leakage

- Model drift and instability

- Third-party model dependencies

Traditional tools cannot effectively test AI workflow behavior, because they lack visibility into how AI systems think, respond, and evolve.

What It Means to Test AI Workflow

To truly secure AI systems, organizations must go beyond static code checks and start validating the entire AI lifecycle.

Testing AI Workflows Includes:

- Prompt logic and input handling

- Model configurations and guardrails

- Training and fine-tuning datasets

- API integrations and external models

- Output behavior and response patterns

Traditional vs AI Workflow Testing

Traditional Testing

Test AI Workflow

Code-level checks

End-to-end AI validation

Static analysis

Context-aware analysis

Limited scope

Full AI lifecycle coverage

Known vulnerabilities

Dynamic behavior & risk detection

By choosing to test AI workflow continuously, organizations can proactively detect risks before they impact users or business operations.

Top AI Security Risks in Development Workflows

1. Prompt Injection Attacks

Malicious inputs can override system instructions, leading to unsafe or manipulated outputs.

2. Data Leakage

AI models may unintentionally expose:

- Personally identifiable information (PII)

- Sensitive enterprise data

- Training dataset artifacts

3. Model Misconfiguration

Poor setup can result in:

- Weak guardrails

- Uncontrolled outputs

- Expanded attack surfaces

4. Third-Party Dependencies

External models and APIs introduce:

- Supply chain risks

- Limited transparency

- Unknown vulnerabilities

5. Lack of Governance

Without structured validation:

- Compliance risks increase

- Audit readiness declines

How AI Code Scanning Helps Test AI Workflow

AI code scanning plays a critical role in enabling teams to test AI workflow at every stage of development.

Core Capabilities

- Automated security validation during development

- Real-time detection of AI-specific vulnerabilities

- Policy enforcement before deployment

- Continuous monitoring after release

Shift-Left Advantage

- Catch issues earlier in the lifecycle

- Reduce remediation costs

- Accelerate deployment timelines

Instead of one-time testing, organizations can continuously test AI workflow behavior, ensuring systems remain secure as they evolve.

Built on Trusted AI Security Frameworks

Testing AI workflows effectively requires alignment with global standards.

OWASP Top 10 for LLMs

Detects:

- Prompt injection vulnerabilities

- Sensitive data exposure

- Unsafe output handling

NIST AI Risk Management Framework

Supports:

- Risk identification

- Continuous monitoring

- AI governance

MITRE ATLAS

Enables:

- Adversarial attack simulation

- Threat modeling

- Improved resilience

EU AI Act Readiness

Helps:

- Classify AI risk levels

- Ensure transparency

- Prepare for compliance

ISO/IEC 42001

Provides:

- Standardized AI governance

- Risk management frameworks

- Responsible AI practices

Benefits of Testing AI Workflow Early

💰 Reduced Costs

Fix vulnerabilities during development instead of post-deployment.

⚡ Faster Time-to-Market

Avoid last-minute security delays.

📜 Stronger Compliance

Stay aligned with global regulations from day one.

🤝 Increased Trust

Build confidence among users, stakeholders, and regulators.

How Trusys AI Enables You to Test AI Workflow

Trusys AI delivers a unified platform designed to secure AI systems from development to production.

🔍 Automated AI Workflow Testing

- Scan AI pipelines for vulnerabilities

- Detect prompt injection risks

- Analyze model behavior

🛡️ LLM Risk Detection

- Aligned with OWASP standards

- Real-time vulnerability insights

⚔️ Adversarial Testing

- Simulate real-world attacks

- Stress-test AI workflows

📊 Governance & Compliance

- Track risks across lifecycle

- Maintain audit readiness

- Support regulatory frameworks

🌍 Use Cases

- Banking & Finance: Prevent data leaks in AI advisors

- Healthcare: Ensure compliance in diagnostics

- Enterprise SaaS: Secure AI copilots and chatbots

Best Practices to Test AI Workflow Securely

✅ Integrate security early in development

✅ Continuously test AI workflow across pipelines

✅ Use adversarial testing to uncover hidden risks

✅ Secure data pipelines against leakage and poisoning

✅ Monitor AI systems in production

The Future of AI Workflow Testing

As AI adoption grows, testing and security will become inseparable.

What’s Next

- AI-native testing platforms

- Continuous AI assurance models

- Standardized compliance frameworks

Organizations that prioritize the ability to test AI workflow early and continuously will gain a competitive advantage in scalability, trust, and innovation.

Conclusion

AI is transforming industries—but without proactive validation, it also introduces critical risks.

The ability to test AI workflow early is no longer optional—it’s essential.

By embedding security into development workflows, organizations can:

- Build resilient AI systems

- Accelerate innovation

- Ensure compliance and trust

Ready to Test Your AI Workflow Before It Breaks?

Don’t wait for production failures to expose vulnerabilities.

Trusys AI helps you test AI workflow early, detect risks proactively, and deploy AI systems with confidence.

Stop guessing.

Start measuring.

Join teams building reliable AI with Trusys. Start with a free trial, no credit card required. Get your first evaluation running in under 10 minutes.

Questions about Trusys?

Our team is here to help. Schedule a personalized demo to see how Trusys fits your specific use case.

Book a Demo

Ready to dive in?

Check out our documentation and tutorials. Get started with example datasets and evaluation templates.

Start Free Trial

Free Trial

No credit card required

10 Min

to get started

24/7

Enterprise support