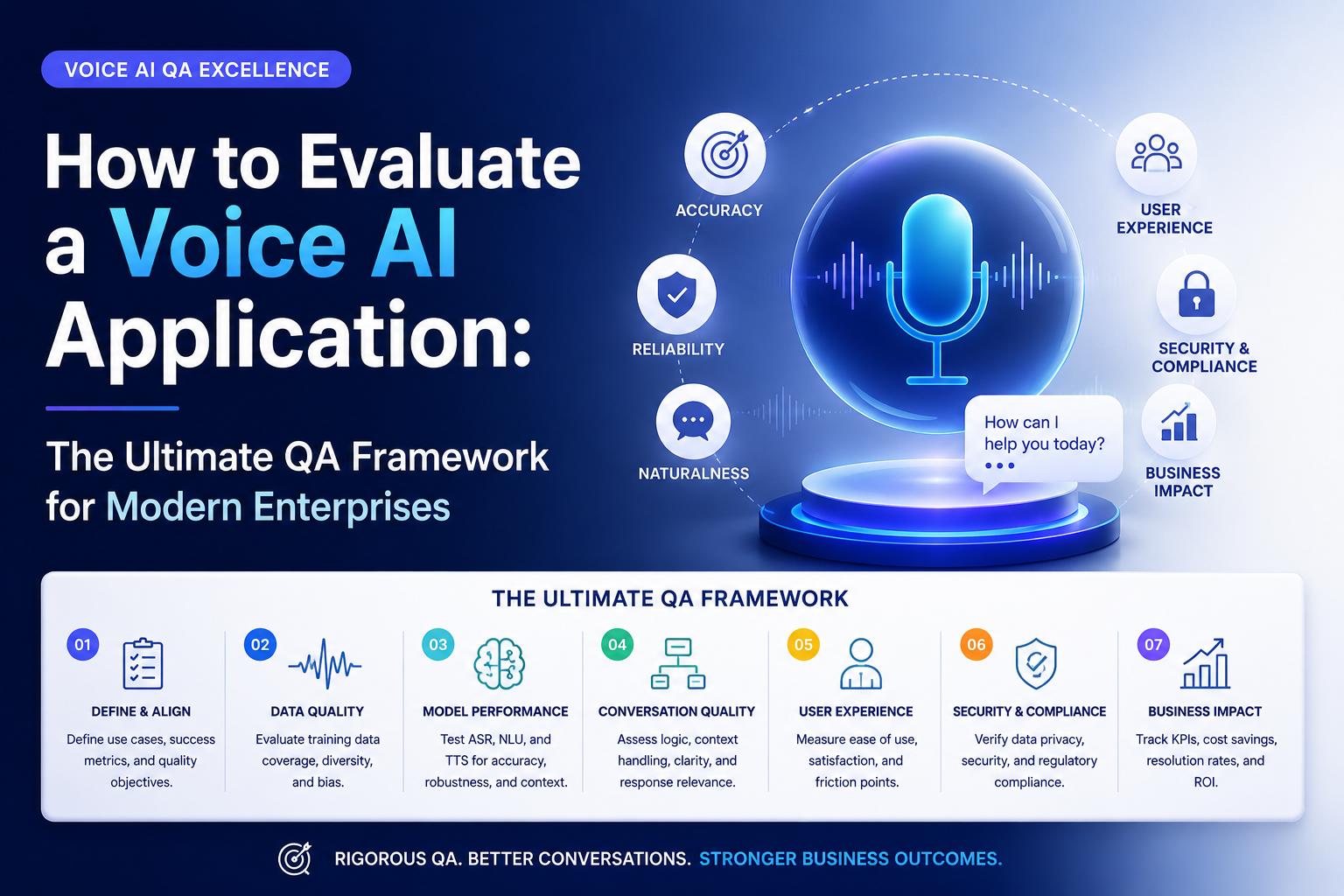

How to Evaluate a Voice AI Application: The Ultimate QA Framework for Modern Enterprises

2026-05-09

How to Evaluate a Voice AI Application: A Framework for QA Teams

Voice AI applications are everywhere these days — from virtual assistants and AI-powered contact centers to healthcare voice bots and smart enterprise systems. Businesses are rapidly adopting conversational AI to improve customer engagement, automate workflows, and deliver faster support.

But here’s the thing: a Voice AI application is only as good as its ability to understand, respond, and adapt to real human conversations.

That’s why QA teams play a critical role in Voice AI success.

Traditional software testing alone isn’t enough anymore. Testing a Voice AI application involves evaluating speech recognition accuracy, natural language understanding, conversational context, scalability, security, and user experience — all at once.

For organizations embracing AI-driven digital transformation, companies like Trusys.ai are helping enterprises implement scalable QA strategies that ensure conversational AI systems remain accurate, reliable, and enterprise-ready.

In this guide, we’ll break down a practical Voice AI testing framework that QA teams can use to evaluate modern conversational AI systems effectively.

Why Voice AI Quality Assurance Matters

Voice AI systems interact directly with users in real time. Even small issues can quickly impact customer trust and business performance.

Imagine these scenarios:

- A banking assistant misunderstands an account request

- A healthcare bot fails to recognize medical terminology

- A customer support AI loops endlessly without resolving the issue

Frustrating, right?

That’s why Voice AI Quality Assurance is becoming a top priority for enterprises.

The Growing Adoption of Voice AI

According to Gartner, conversational AI adoption continues to rise across industries such as:

- Banking

- Healthcare

- Retail

- Telecom

- Logistics

- Insurance

Voice interfaces are now central to customer experience strategies.

Risks of Poor Voice AI Performance

Without proper testing, organizations may face:

- High customer frustration

- Increased support costs

- Incorrect responses

- Security vulnerabilities

- Brand reputation damage

- Compliance issues

Why Enterprises Need Scalable QA

Modern Voice AI systems constantly evolve through machine learning updates and new conversational data. QA teams need testing frameworks that support:

- Continuous validation

- Real-time monitoring

- Automated regression testing

- Scalable performance testing

This is where intelligent QA engineering approaches, like those championed by Trusys.ai, become essential.

Understanding the Architecture of Voice AI Applications

Before testing begins, QA teams must understand the core building blocks of Voice AI systems.

Speech-to-Text (STT)

STT converts spoken language into text.

QA teams must evaluate:

- Accent recognition

- Pronunciation handling

- Noise resilience

- Speech accuracy

Natural Language Processing (NLP)

NLP helps the AI understand user intent and context.

Testing focuses on:

- Intent recognition

- Entity extraction

- Context interpretation

- Sentiment understanding

Dialogue Orchestration

This controls the conversation flow.

It determines:

- What response the AI gives

- How context is maintained

- How fallback handling works

Text-to-Speech (TTS)

TTS converts AI-generated responses into natural speech.

QA should validate:

- Voice clarity

- Pronunciation

- Natural tone

- Emotional consistency

API and Backend Integrations

Voice AI applications often integrate with:

- CRMs

- Databases

- Payment systems

- Enterprise applications

Integration testing becomes critical to ensure smooth workflows.

The Trusys.ai Approach to Voice AI Testing

Trusys.ai focuses on intelligent quality engineering designed for AI-driven applications. Their approach emphasizes:

- Automation-first testing

- AI performance monitoring

- Enterprise scalability

- Human-centered conversational validation

Instead of treating Voice AI as standard software, modern QA frameworks recognize that conversational systems behave dynamically and continuously learn from user interactions.

Functional Testing for Conversational Accuracy

Functional testing validates whether the Voice AI behaves as expected.

Intent Recognition Testing

The AI must correctly understand user requests.

Example:

| User Input | Expected Intent |
| --- | --- |
| “Book a flight to Chicago” | Flight Booking |
| “What’s my account balance?” | Account Inquiry |

QA teams should test:

- Similar phrases

- Slang

- Regional wording

- Ambiguous requests
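The variations above can be organized as a data-driven check. What follows is a minimal sketch, not a definitive harness: `classify_intent` is a hypothetical keyword-based stand-in for a call to the system under test, and the intent labels and extra paraphrases are illustrative assumptions.

```python
from typing import Callable

# Each case pairs an utterance with the intent the assistant should return.
# The first two rows mirror the example table; the others are illustrative variants.
INTENT_CASES = [
    ("Book a flight to Chicago", "flight_booking"),
    ("What's my account balance?", "account_inquiry"),
    ("I need to fly to Chicago next week", "flight_booking"),        # similar phrase
    ("How much cash have I got in my account?", "account_inquiry"),  # informal wording
]


def classify_intent(utterance: str) -> str:
    """Hypothetical stand-in for the real NLU call; replace with your own client."""
    text = utterance.lower()
    if "flight" in text or "fly" in text:
        return "flight_booking"
    if "account" in text or "balance" in text:
        return "account_inquiry"
    return "fallback"


def find_intent_failures(cases, classifier: Callable[[str], str]):
    """Return (utterance, expected, actual) for every misclassified case."""
    failures = []
    for utterance, expected in cases:
        actual = classifier(utterance)
        if actual != expected:
            failures.append((utterance, expected, actual))
    return failures


failures = find_intent_failures(INTENT_CASES, classify_intent)
```

Keeping the cases in a plain list makes it easy to add slang, regional wording, and ambiguous requests without touching the test logic.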

Entity Extraction Validation

Entities include:

- Dates

- Locations

- Names

- Product IDs

Example:

“Schedule a meeting tomorrow at 3 PM.”

Entities:

- Date = Tomorrow

- Time = 3 PM
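One way to make this check repeatable is to compare normalized values instead of raw strings, so that "tomorrow" is resolved against a fixed reference date. The sketch below assumes a hypothetical regex-based `extract_entities` function standing in for the real extractor; the entity names `date` and `time` are also assumptions.

```python
import re
from datetime import date, time, timedelta


def extract_entities(utterance: str, today: date) -> dict:
    """Hypothetical, regex-based stand-in for the system's entity extractor."""
    entities = {}
    text = utterance.lower()
    if "tomorrow" in text:
        entities["date"] = today + timedelta(days=1)
    # Matches times such as "3 pm" or "10:30 am".
    match = re.search(r"\b(\d{1,2})(?::(\d{2}))?\s*(am|pm)\b", text)
    if match:
        hour = int(match.group(1)) % 12  # 12 am -> 0, 12 pm -> 0 (+12 below)
        if match.group(3) == "pm":
            hour += 12
        entities["time"] = time(hour, int(match.group(2) or 0))
    return entities


reference_day = date(2026, 5, 9)  # fixed "today" so the check is deterministic
result = extract_entities("Schedule a meeting tomorrow at 3 PM.", reference_day)
expected = {"date": date(2026, 5, 10), "time": time(15, 0)}
```

Comparing `result` with `expected` catches both missing entities and wrongly resolved values, such as a 3 PM request stored as 3 AM.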

Multi-Turn Dialogue Testing

Voice AI systems should maintain conversational context.

Example:

User: “Book a hotel in Boston.”

AI: “What dates?”

User: “This weekend.”

The AI should remember the original request.
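A multi-turn test can assert on the session state after each turn, not only on the final reply. The sketch below uses `FakeHotelBot`, a hypothetical in-memory stand-in for a session with the system under test; the state keys are assumptions chosen for illustration.

```python
class FakeHotelBot:
    """Hypothetical in-memory stand-in for a session with the system under test."""

    def __init__(self):
        self.state = {"intent": None, "city": None, "dates": None}

    def send(self, utterance: str) -> str:
        text = utterance.lower()
        if "book a hotel" in text:
            self.state["intent"] = "hotel_booking"
            # Treat whatever follows " in " as the city, minus trailing punctuation.
            parts = utterance.split(" in ", 1)
            self.state["city"] = parts[1].rstrip(".") if len(parts) == 2 else None
            return "What dates?"
        if self.state["intent"] == "hotel_booking" and self.state["dates"] is None:
            # A follow-up answer fills the missing slot without restating the request.
            self.state["dates"] = utterance.rstrip(".")
            return f"Booking a hotel in {self.state['city']} for {self.state['dates'].lower()}."
        return "Sorry, could you rephrase that?"


bot = FakeHotelBot()
first_reply = bot.send("Book a hotel in Boston.")
second_reply = bot.send("This weekend.")
```

After the second turn, the session should still hold the original intent and city alongside the newly supplied dates.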

Context Awareness

Test whether the AI:

- Retains memory

- Handles follow-up questions

- Supports interruptions

- Recovers gracefully

Speech Recognition and Audio Validation

Speech recognition testing is one of the most critical areas in evaluating Voice AI applications.

Accent Diversity Testing

Users speak differently across regions.

QA teams should test:

- American accents

- British accents

- Indian accents

- Australian accents

- Non-native speakers

Noise Resilience Testing

Real-world environments are noisy.

Test scenarios should include:

- Traffic sounds

- Office chatter

- Wind noise

- Echo environments
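Noise scenarios like these are often reproduced by mixing recorded noise into clean speech at a controlled signal-to-noise ratio (SNR), so the same utterance can be replayed at several difficulty levels. The sketch below is one possible approach using only the standard library; the synthetic tone and white noise are placeholders for real recordings, and the 10 dB target is an arbitrary example.

```python
import math
import random


def rms(samples):
    """Root-mean-square amplitude of a list of float samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def mix_at_snr(speech, noise, snr_db):
    """Scale `noise` so the speech-to-noise ratio equals `snr_db`, then add it to `speech`."""
    if len(noise) < len(speech):
        raise ValueError("noise clip must be at least as long as the speech clip")
    noise = noise[: len(speech)]
    # SNR(dB) = 20 * log10(rms_speech / rms_noise), solved for the target noise RMS.
    target_noise_rms = rms(speech) / (10 ** (snr_db / 20))
    gain = target_noise_rms / rms(noise)
    return [s + gain * n for s, n in zip(speech, noise)]


# Synthetic stand-ins: a 440 Hz tone for "speech" and white noise for the environment.
rng = random.Random(0)
speech = [math.sin(2 * math.pi * 440 * t / 16000) for t in range(16000)]
noise = [rng.uniform(-1.0, 1.0) for _ in range(16000)]
noisy = mix_at_snr(speech, noise, snr_db=10)
```

Running the recognizer on the same utterance at, say, 20 dB, 10 dB, and 0 dB shows how quickly accuracy degrades as the environment gets louder.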

Pronunciation Testing

Users pronounce words differently.

Examples:

- “Data”

- “Route”

- Brand names

- Industry jargon

Multilingual Support

If the Voice AI supports multiple languages, test:

- Translation accuracy

- Context switching

- Regional dialects

Conversational Experience Testing

A Voice AI application should feel natural and intuitive.

Human-Like Interaction Quality

QA teams should evaluate:

- Response tone

- Conversational pacing

- Natural pauses

- Personality consistency

Fallback Response Testing

When AI doesn’t understand a request, fallback handling matters.

Poor fallback:

“I didn’t understand.”

Better fallback:

“I’m sorry, could you rephrase that question?”

Error Handling Validation

Test how the AI handles:

- Invalid input

- Silence

- Interruptions

- Unexpected responses

Conversation Continuity

Users may switch topics during conversations.

The AI should adapt smoothly without losing context.

Performance Engineering for Voice AI

Performance issues can destroy user trust instantly.

Latency Testing

Voice AI systems must respond quickly.

Recommended benchmarks:

- Under 300 ms for speech recognition

- Under 2 seconds for complete response
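Latency budgets are usually checked against a percentile, not an average, because a few slow responses can hide behind a good mean. The sketch below times a hypothetical `fake_recognize` call (a sleep standing in for a real request) and compares the 95th percentile with the 300 ms figure above; the choice of p95 and of 20 runs is an assumption.

```python
import statistics
import time


def measure_latencies_ms(call, runs=20):
    """Time `call()` repeatedly and return each duration in milliseconds."""
    durations = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        durations.append((time.perf_counter() - start) * 1000)
    return durations


def p95(values):
    """95th percentile using the inclusive method from the statistics module."""
    return statistics.quantiles(values, n=100, method="inclusive")[94]


def fake_recognize():
    """Hypothetical stand-in for a speech recognition request."""
    time.sleep(0.01)


latencies = measure_latencies_ms(fake_recognize)
within_budget = p95(latencies) < 300  # 300 ms speech recognition budget
```

The same helper can wrap a full request-to-audio round trip to check the 2-second budget for complete responses.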

Scalability Validation

Can the system handle thousands of users simultaneously?

QA teams should simulate:

- Peak traffic

- Concurrent sessions

- Heavy conversational loads

Concurrent User Simulation

Use load-testing platforms to evaluate:

- CPU utilization

- Memory usage

- API bottlenecks
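Before reaching for a full load-testing platform, a small script can show the shape of a concurrent-session test. The sketch below uses `asyncio` with a sleep standing in for each request, so `simulated_session` and the 200-user figure are illustrative assumptions. It records client-side durations only; CPU and memory figures would have to come from monitoring on the server side.

```python
import asyncio
import time


async def simulated_session(session_id: int, turns: int = 3) -> float:
    """Hypothetical user session; the sleep stands in for one request/response turn."""
    start = time.perf_counter()
    for _ in range(turns):
        await asyncio.sleep(0.01)  # replace with a real async call to the voice endpoint
    return time.perf_counter() - start


async def run_load(concurrent_users: int):
    """Start all sessions at once and collect each session's total duration."""
    tasks = [simulated_session(i) for i in range(concurrent_users)]
    return await asyncio.gather(*tasks)


durations = asyncio.run(run_load(concurrent_users=200))
slowest = max(durations)
```

Comparing `slowest` across increasing user counts gives a first indication of where response times start to climb.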

Real-Time Performance Monitoring

Continuous monitoring helps identify:

- Delays

- Failure spikes

- Audio processing bottlenecks

Security and Compliance Validation

Voice AI applications often process sensitive information.

Security testing is non-negotiable.

Data Encryption

Test encryption for:

- Voice recordings

- API communication

- User credentials

Voice Biometric Protection

If voice authentication is used, QA teams should validate:

- Spoofing resistance

- Replay attack prevention

- Authentication accuracy

GDPR and Compliance Testing

Ensure compliance with the regulations and standards that apply to your data and region, such as:

- GDPR

- HIPAA

- CCPA

- PCI DSS

Authentication Workflows

Test:

- Secure login

- Multi-factor authentication

- Session handling

Accessibility and User Experience

Voice AI should work for everyone.

Inclusive Design Testing

Ensure accessibility for:

- Elderly users

- Visually impaired users

- Users with speech challenges

Voice Clarity Evaluation

Test speech output for:

- Pronunciation clarity

- Volume balance

- Natural rhythm

Accessibility Standards

QA teams should align with standards such as:

- WCAG accessibility guidelines

- Voice interaction usability standards

Customer Satisfaction Metrics

Measure:

- User engagement

- Session completion

- Customer frustration signals

Key Metrics QA Teams Should Measure

Metrics help quantify Voice AI quality.

1. Word Error Rate (WER)

Measures speech recognition accuracy.

Formula:

WER = (Substitutions + Insertions + Deletions) / Total Words in the Reference Transcript

Lower WER means better recognition accuracy.
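The formula can be computed with a word-level edit distance between the reference transcript and the recognizer's output. The sketch below is a minimal version that lowercases and splits on whitespace; real pipelines usually apply more text normalization (punctuation, numerals), which is left out here.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + insertions + deletions) / words in the reference."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev[j] holds the edit distance between the reference words seen so far and hyp[:j].
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)


# One deletion ("a") and one insertion ("please") against a 5-word reference.
wer = word_error_rate("book a flight to chicago", "book flight to chicago please")
```

Because insertions count as errors, WER can exceed 1.0 when the hypothesis is much longer than the reference.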

2. Intent Accuracy

Measures how often the AI correctly identifies user intent.

Target benchmark:

- 90%+ for enterprise systems
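Intent accuracy is the share of test utterances whose predicted intent matches the expected one. The sketch below also breaks the figure down per intent, since a strong overall number can hide one weak intent; the sample pairs are illustrative labels, not real evaluation data.

```python
from collections import defaultdict


def intent_accuracy(results):
    """`results` is a list of (expected_intent, predicted_intent) pairs."""
    correct = sum(1 for expected, predicted in results if expected == predicted)
    return correct / len(results)


def accuracy_by_intent(results):
    """Accuracy for each expected intent, to expose weak spots behind the average."""
    totals, hits = defaultdict(int), defaultdict(int)
    for expected, predicted in results:
        totals[expected] += 1
        hits[expected] += expected == predicted
    return {intent: hits[intent] / totals[intent] for intent in totals}


# Illustrative labels only, not real evaluation data.
sample = [
    ("flight_booking", "flight_booking"),
    ("flight_booking", "flight_booking"),
    ("account_inquiry", "account_inquiry"),
    ("account_inquiry", "fallback"),
]
overall = intent_accuracy(sample)
per_intent = accuracy_by_intent(sample)
meets_target = overall >= 0.90  # the 90% benchmark above
```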

3. Task Completion Rate

Tracks whether users successfully complete tasks.

Examples:

- Booking appointments

- Processing payments

- Resolving support requests

4. Response Time

Measures AI responsiveness.

Slow responses lead to poor user experiences.

5. Customer Satisfaction Score (CSAT)

Gather feedback through:

- Surveys

- Ratings

- Conversation reviews

Recommended Tools for Voice AI Testing

Here are some powerful tools QA teams can use.

Botium

Excellent for:

- Conversational testing

- Automated chatbot validation

- NLP testing

Cyara

Widely used for:

- Voice testing

- Contact center AI validation

- IVR testing

Google Dialogflow Simulator

Useful for:

- Intent testing

- NLP debugging

- Conversation simulation

Amazon Lex Testing Tools

Helpful for:

- AWS voice bot testing

- Conversational flow validation

Selenium Integrations

Supports:

- Browser-based UI automation

- Workflow validation

- Integrated testing

Load Testing Platforms

Use tools like the following for scalability testing:

- JMeter

- LoadRunner

- k6

Common Challenges in Voice AI Testing

Voice AI introduces unique QA complexities.

Diverse Accents and Dialects

Speech recognition struggles with regional pronunciation differences.

Emotional Speech

Angry, excited, or stressed users may speak unpredictably.

Ambiguous User Intent

Users often phrase requests unclearly.

Example:

“I need help with my account.”

Which account issue exactly?

Dataset Bias

Unrepresentative training data leads to biased AI behavior, such as higher error rates for accents or dialects that the data underrepresents.

Background Noise Interference

Real-world environments remain difficult for speech engines.

Best Practices for Enterprise QA Teams

Successful Voice AI testing requires a strategic approach.

1. Implement Continuous AI Testing

AI systems evolve continuously.

QA should include:

- Regression testing

- Continuous monitoring

- Automated validation
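Automated regression checks can be as simple as comparing the current build's metrics with a stored baseline and flagging anything that moved in the wrong direction. The sketch below is one possible shape for such a gate; the metric names, the 0.01 tolerance, and the numbers are illustrative assumptions.

```python
# Direction of improvement for each tracked metric (names are illustrative).
HIGHER_IS_BETTER = {"intent_accuracy": True, "task_completion_rate": True, "wer": False}


def find_regressions(baseline, current, tolerance=0.01):
    """Return metrics that moved in the wrong direction by more than `tolerance`."""
    regressions = {}
    for name, higher_is_better in HIGHER_IS_BETTER.items():
        delta = current[name] - baseline[name]
        worsened_by = -delta if higher_is_better else delta
        if worsened_by > tolerance:
            regressions[name] = (baseline[name], current[name])
    return regressions


# Illustrative numbers, not measurements.
baseline = {"intent_accuracy": 0.93, "task_completion_rate": 0.88, "wer": 0.08}
current = {"intent_accuracy": 0.94, "task_completion_rate": 0.84, "wer": 0.085}
regressions = find_regressions(baseline, current)
```

A non-empty result can fail the pipeline so that a model update which hurts task completion is caught before release.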

2. Use Real-World Conversational Data

Synthetic testing alone isn’t enough.

Include:

- Actual user conversations

- Diverse demographics

- Real environments

3. Adopt Automation-First QA

Automation improves:

- Speed

- Coverage

- Consistency

This aligns closely with Trusys.ai’s intelligent automation philosophy.

4. Perform Cross-Platform Testing

Test Voice AI across:

- Smartphones

- Smart speakers

- Desktop apps

- Automotive systems

5. Maintain Human-in-the-Loop Validation

Human reviewers remain essential for:

- Emotional context

- Conversational nuance

- Edge-case analysis

Future of Voice AI Quality Engineering

Voice AI testing is evolving rapidly.

Generative AI Voice Systems

AI-generated conversations are becoming more dynamic and personalized.

Emotion-Aware AI Testing

Future systems will detect:

- Tone

- Sentiment

- Emotional state

Autonomous QA Automation

AI-driven testing platforms will automatically generate test cases.

Multilingual Conversational AI

Global businesses require:

- Real-time translation

- Language adaptation

- Cultural context awareness

FAQs

1. What is Voice AI testing?

Voice AI testing evaluates how accurately and effectively a conversational AI system understands speech, processes intent, and responds to users.

2. Why is QA important for Voice AI applications?

QA ensures Voice AI systems remain accurate, secure, scalable, and user-friendly while reducing business risks and customer frustration.

3. What is Word Error Rate (WER)?

WER measures speech recognition accuracy by calculating errors in transcribed speech compared to the original spoken words.

4. Which tools are commonly used for Voice AI testing?

Popular tools include Botium, Cyara, Dialogflow simulator, Amazon Lex tools, Selenium, and performance testing platforms like JMeter.

5. How can enterprises improve Voice AI quality assurance?

Enterprises can improve QA by using automation, real-world datasets, continuous testing strategies, and scalable AI testing frameworks.

Final Thoughts

As Voice AI adoption accelerates, enterprises can no longer rely on traditional QA methods alone. Evaluating conversational AI systems requires a specialized framework that combines functional testing, speech validation, performance engineering, security testing, and human-centered usability evaluation.

Organizations that invest in scalable Voice AI Quality Assurance gain a major competitive advantage by delivering more reliable, intelligent, and engaging user experiences.

With expertise in AI quality engineering, intelligent automation, and enterprise-scale testing strategies, Trusys.ai helps organizations build Voice AI systems that are not only innovative but also dependable and production-ready.

How to Evaluate a Voice AI Application: The Ultimate QA Framework for Modern Enterprises

2026-05-09

How to Evaluate a Voice AI Application: A Framework for QA Teams

Voice AI applications are everywhere these days — from virtual assistants and AI-powered contact centers to healthcare voice bots and smart enterprise systems. Businesses are rapidly adopting conversational AI to improve customer engagement, automate workflows, and deliver faster support.

But here’s the thing: a Voice AI application is only as good as its ability to understand, respond, and adapt to real human conversations.

That’s why QA teams play a critical role in Voice AI success.

Traditional software testing alone isn’t enough anymore. Testing a Voice AI application involves evaluating speech recognition accuracy, natural language understanding, conversational context, scalability, security, and user experience — all at once.

For organizations embracing AI-driven digital transformation, companies like Trusys.ai are helping enterprises implement scalable QA strategies that ensure conversational AI systems remain accurate, reliable, and enterprise-ready.

In this guide, we’ll break down a practical Voice AI testing framework that QA teams can use to evaluate modern conversational AI systems effectively.

Why Voice AI Quality Assurance Matters

Voice AI systems interact directly with users in real time. Even small issues can quickly impact customer trust and business performance.

Imagine this scenario:

- A banking assistant misunderstands an account request

- A healthcare bot fails to recognize medical terminology

- A customer support AI loops endlessly without resolving the issue

Frustrating, right?

That’s why Voice AI Quality Assurance is becoming a top priority for enterprises.

The Growing Adoption of Voice AI

According to Gartner, conversational AI adoption continues to rise across industries such as:

- Banking

- Healthcare

- Retail

- Telecom

- Logistics

- Insurance

Voice interfaces are now central to customer experience strategies.

Risks of Poor Voice AI Performance

Without proper testing, organizations may face:

- High customer frustration

- Increased support costs

- Incorrect responses

- Security vulnerabilities

- Brand reputation damage

- Compliance issues

Why Enterprises Need Scalable QA

Modern Voice AI systems constantly evolve through machine learning updates and new conversational data. QA teams need testing frameworks that support:

- Continuous validation

- Real-time monitoring

- Automated regression testing

- Scalable performance testing

This is where intelligent QA engineering approaches, like those championed by Trusys.ai, become essential.

Understanding the Architecture of Voice AI Applications

Before testing begins, QA teams must understand the core building blocks of Voice AI systems.

Speech-to-Text (STT)

STT converts spoken language into text.

QA teams must evaluate:

- Accent recognition

- Pronunciation handling

- Noise resilience

- Speech accuracy

Natural Language Processing (NLP)

NLP helps the AI understand user intent and context.

Testing focuses on:

- Intent recognition

- Entity extraction

- Context interpretation

- Sentiment understanding

Dialogue Orchestration

This controls the conversation flow.

It determines:

- What response the AI gives

- How context is maintained

- How fallback handling works

Text-to-Speech (TTS)

TTS converts AI-generated responses into natural speech.

QA should validate:

- Voice clarity

- Pronunciation

- Natural tone

- Emotional consistency

API and Backend Integrations

Voice AI applications often integrate with:

- CRMs

- Databases

- Payment systems

- Enterprise applications

Integration testing becomes critical to ensure smooth workflows.

The Trusys.ai Approach to Voice AI Testing

Trusys.ai focuses on intelligent quality engineering designed for AI-driven applications. Their approach emphasizes:

- Automation-first testing

- AI performance monitoring

- Enterprise scalability

- Human-centered conversational validation

Instead of treating Voice AI as standard software, modern QA frameworks recognize that conversational systems behave dynamically and continuously learn from user interactions.

Functional Testing for Conversational Accuracy

Functional testing validates whether the Voice AI behaves as expected.

Intent Recognition Testing

The AI must correctly understand user requests.

Example:

User Input

Expected Intent

“Book a flight to Chicago”

Flight Booking

“What’s my account balance?”

Account Inquiry

QA teams should test:

- Similar phrases

- Slang

- Regional wording

- Ambiguous requests

Entity Extraction Validation

Entities include:

- Dates

- Locations

- Names

- Product IDs

Example:

“Schedule a meeting tomorrow at 3 PM.”

Entities:

- Date = Tomorrow

- Time = 3 PM

Multi-Turn Dialogue Testing

Voice AI systems should maintain conversational context.

Example:

User: “Book a hotel in Boston.”

AI: “What dates?”

User: “This weekend.”

The AI should remember the original request.

Context Awareness

Test whether the AI:

- Retains memory

- Handles follow-up questions

- Supports interruptions

- Recovers gracefully

Speech Recognition and Audio Validation

Speech recognition testing is one of the most critical areas in evaluating Voice AI applications.

Accent Diversity Testing

Users speak differently across regions.

QA teams should test:

- American accents

- British accents

- Indian accents

- Australian accents

- Non-native speakers

Noise Resilience Testing

Real-world environments are noisy.

Test scenarios should include:

- Traffic sounds

- Office chatter

- Wind noise

- Echo environments

Pronunciation Testing

Users pronounce words differently.

Examples:

- “Data”

- “Route”

- Brand names

- Industry jargon

Multilingual Support

If the Voice AI supports multiple languages, test:

- Translation accuracy

- Context switching

- Regional dialects

Conversational Experience Testing

A Voice AI application should feel natural and intuitive.

Human-Like Interaction Quality

QA teams should evaluate:

- Response tone

- Conversational pacing

- Natural pauses

- Personality consistency

Fallback Response Testing

When AI doesn’t understand a request, fallback handling matters.

Poor fallback:

“I didn’t understand.”

Better fallback:

“I’m sorry, could you rephrase that question?”

Error Handling Validation

Test how the AI handles:

- Invalid input

- Silence

- Interruptions

- Unexpected responses

Conversation Continuity

Users may switch topics during conversations.

The AI should adapt smoothly without losing context.

Performance Engineering for Voice AI

Performance issues can destroy user trust instantly.

Latency Testing

Voice AI systems must respond quickly.

Recommended benchmarks:

- Under 300ms for speech recognition

- Under 2 seconds for complete response

Scalability Validation

Can the system handle thousands of users simultaneously?

QA teams should simulate:

- Peak traffic

- Concurrent sessions

- Heavy conversational loads

Concurrent User Simulation

Use load-testing platforms to evaluate:

- CPU utilization

- Memory usage

- API bottlenecks

Real-Time Performance Monitoring

Continuous monitoring helps identify:

- Delays

- Failure spikes

- Audio processing bottlenecks

Security and Compliance Validation

Voice AI applications often process sensitive information.

Security testing is non-negotiable.

Data Encryption

Test encryption for:

- Voice recordings

- API communication

- User credentials

Voice Biometric Protection

If voice authentication is used, QA teams should validate:

- Spoofing resistance

- Replay attack prevention

- Authentication accuracy

GDPR and Compliance Testing

Ensure compliance with:

- GDPR

- HIPAA

- CCPA

- PCI DSS

Authentication Workflows

Test:

- Secure login

- Multi-factor authentication

- Session handling

Accessibility and User Experience

Voice AI should work for everyone.

Inclusive Design Testing

Ensure accessibility for:

- Elderly users

- Visually impaired users

- Users with speech challenges

Voice Clarity Evaluation

Test speech output for:

- Pronunciation clarity

- Volume balance

- Natural rhythm

Accessibility Standards

QA teams should align with standards such as:

- WCAG accessibility guidelines

- Voice interaction usability standards

Customer Satisfaction Metrics

Measure:

- User engagement

- Session completion

- Customer frustration signals

Key Metrics QA Teams Should Measure

Metrics help quantify Voice AI quality.

1. Word Error Rate (WER)

Measures speech recognition accuracy.

Formula:

WER = (Substitutions + Insertions + Deletions) / Total Words

Lower WER means better recognition accuracy.

2. Intent Accuracy

Measures how often the AI correctly identifies user intent.

Target benchmark:

- 90%+ for enterprise systems

3. Task Completion Rate

Tracks whether users successfully complete tasks.

Examples:

- Booking appointments

- Processing payments

- Resolving support requests

4. Response Time

Measures AI responsiveness.

Slow responses lead to poor user experiences.

5. Customer Satisfaction Score (CSAT)

Gather feedback through:

- Surveys

- Ratings

- Conversation reviews

Recommended Tools for Voice AI Testing

Here are some powerful tools QA teams can use.

Botium

Excellent for:

- Conversational testing

- Automated chatbot validation

- NLP testing

Cyara

Widely used for:

- Voice testing

- Contact center AI validation

- IVR testing

Google Dialogflow Simulator

Useful for:

- Intent testing

- NLP debugging

- Conversation simulation

Amazon Lex Testing Tools

Helpful for:

- AWS voice bot testing

- Conversational flow validation

Selenium Integrations

Supports:

- Backend automation

- Workflow validation

- Integrated testing

Load Testing Platforms

Use tools like:

- JMeter

- LoadRunner

- k6

For scalability testing.

Common Challenges in Voice AI Testing

Voice AI introduces unique QA complexities.

Diverse Accents and Dialects

Speech recognition struggles with regional pronunciation differences.

Emotional Speech

Angry, excited, or stressed users may speak unpredictably.

Ambiguous User Intent

Users often phrase requests unclearly.

Example:

“I need help with my account.”

Which account issue exactly?

Dataset Bias

Poor training data leads to biased AI responses.

Background Noise Interference

Real-world environments remain difficult for speech engines.

Best Practices for Enterprise QA Teams

Successful Voice AI testing requires a strategic approach.

1. Implement Continuous AI Testing

AI systems evolve continuously.

QA should include:

- Regression testing

- Continuous monitoring

- Automated validation

2. Use Real-World Conversational Data

Synthetic testing alone isn’t enough.

Include:

- Actual user conversations

- Diverse demographics

- Real environments

3. Adopt Automation-First QA

Automation improves:

- Speed

- Coverage

- Consistency

This aligns closely with Trusys.ai’s intelligent automation philosophy.

4. Perform Cross-Platform Testing

Test Voice AI across:

- Smartphones

- Smart speakers

- Desktop apps

- Automotive systems

5. Maintain Human-in-the-Loop Validation

Human reviewers remain essential for:

- Emotional context

- Conversational nuance

- Edge-case analysis

Future of Voice AI Quality Engineering

Voice AI testing is evolving rapidly.

Generative AI Voice Systems

AI-generated conversations are becoming more dynamic and personalized.

Emotion-Aware AI Testing

Future systems will detect:

- Tone

- Sentiment

- Emotional state

Autonomous QA Automation

AI-driven testing platforms will automatically generate test cases.

Multilingual Conversational AI

Global businesses require:

- Real-time translation

- Language adaptation

- Cultural context awareness

FAQs

1. What is Voice AI testing?

Voice AI testing evaluates how accurately and effectively a conversational AI system understands speech, processes intent, and responds to users.

2. Why is QA important for Voice AI applications?

QA ensures Voice AI systems remain accurate, secure, scalable, and user-friendly while reducing business risks and customer frustration.

3. What is Word Error Rate (WER)?

WER measures speech recognition accuracy by calculating errors in transcribed speech compared to the original spoken words.

4. Which tools are commonly used for Voice AI testing?

Popular tools include Botium, Cyara, Dialogflow simulator, Amazon Lex tools, Selenium, and performance testing platforms like JMeter.

5. How can enterprises improve Voice AI quality assurance?

Enterprises can improve QA by using automation, real-world datasets, continuous testing strategies, and scalable AI testing frameworks.

Final Thoughts

As Voice AI adoption accelerates, enterprises can no longer rely on traditional QA methods alone. Evaluating conversational AI systems requires a specialized framework that combines functional testing, speech validation, performance engineering, security testing, and human-centered usability evaluation.

Organizations that invest in scalable Voice AI Quality Assurance gain a major competitive advantage by delivering more reliable, intelligent, and engaging user experiences.

With expertise in AI quality engineering, intelligent automation, and enterprise-scale testing strategies, Trusys.ai helps organizations build Voice AI systems that are not only innovative but also dependable and production-ready.

Stop guessing.

Start measuring.

Join teams building reliable AI with TruEval. Start with a free trial, no credit card required. Get your first evaluation running in under 10 minutes.

Questions about Trusys?

Our team is here to help. Schedule a personalized demo to see how Trusys fits your specific use case.

Book a Demo

Ready to dive in?

Check out our documentation and tutorials. Get started with example datasets and evaluation templates.

Start Free Trial

Free Trial

No credit card required

10 Min

To first evaluation

24/7

Enterprise support

How to Evaluate a Voice AI Application: The Ultimate QA Framework for Modern Enterprises

2026-05-09

How to Evaluate a Voice AI Application: A Framework for QA Teams

Voice AI applications are everywhere these days — from virtual assistants and AI-powered contact centers to healthcare voice bots and smart enterprise systems. Businesses are rapidly adopting conversational AI to improve customer engagement, automate workflows, and deliver faster support.

But here’s the thing: a Voice AI application is only as good as its ability to understand, respond, and adapt to real human conversations.

That’s why QA teams play a critical role in Voice AI success.

Traditional software testing alone isn’t enough anymore. Testing a Voice AI application involves evaluating speech recognition accuracy, natural language understanding, conversational context, scalability, security, and user experience — all at once.

For organizations embracing AI-driven digital transformation, companies like Trusys.ai are helping enterprises implement scalable QA strategies that ensure conversational AI systems remain accurate, reliable, and enterprise-ready.

In this guide, we’ll break down a practical Voice AI testing framework that QA teams can use to evaluate modern conversational AI systems effectively.

Why Voice AI Quality Assurance Matters

Voice AI systems interact directly with users in real time. Even small issues can quickly impact customer trust and business performance.

Imagine this scenario:

- A banking assistant misunderstands an account request

- A healthcare bot fails to recognize medical terminology

- A customer support AI loops endlessly without resolving the issue

Frustrating, right?

That’s why Voice AI Quality Assurance is becoming a top priority for enterprises.

The Growing Adoption of Voice AI

According to Gartner, conversational AI adoption continues to rise across industries such as:

- Banking

- Healthcare

- Retail

- Telecom

- Logistics

- Insurance

Voice interfaces are now central to customer experience strategies.

Risks of Poor Voice AI Performance

Without proper testing, organizations may face:

- High customer frustration

- Increased support costs

- Incorrect responses

- Security vulnerabilities

- Brand reputation damage

- Compliance issues

Why Enterprises Need Scalable QA

Modern Voice AI systems constantly evolve through machine learning updates and new conversational data. QA teams need testing frameworks that support:

- Continuous validation

- Real-time monitoring

- Automated regression testing

- Scalable performance testing

This is where intelligent QA engineering approaches, like those championed by Trusys.ai, become essential.

Understanding the Architecture of Voice AI Applications

Before testing begins, QA teams must understand the core building blocks of Voice AI systems.

Speech-to-Text (STT)

STT converts spoken language into text.

QA teams must evaluate:

- Accent recognition

- Pronunciation handling

- Noise resilience

- Speech accuracy

Natural Language Processing (NLP)

NLP helps the AI understand user intent and context.

Testing focuses on:

- Intent recognition

- Entity extraction

- Context interpretation

- Sentiment understanding

Dialogue Orchestration

This controls the conversation flow.

It determines:

- What response the AI gives

- How context is maintained

- How fallback handling works

Text-to-Speech (TTS)

TTS converts AI-generated responses into natural speech.

QA should validate:

- Voice clarity

- Pronunciation

- Natural tone

- Emotional consistency

API and Backend Integrations

Voice AI applications often integrate with:

- CRMs

- Databases

- Payment systems

- Enterprise applications

Integration testing becomes critical to ensure smooth workflows.

The Trusys.ai Approach to Voice AI Testing

Trusys.ai focuses on intelligent quality engineering designed for AI-driven applications. Their approach emphasizes:

- Automation-first testing

- AI performance monitoring

- Enterprise scalability

- Human-centered conversational validation

Instead of treating Voice AI as standard software, modern QA frameworks recognize that conversational systems behave dynamically and continuously learn from user interactions.

Functional Testing for Conversational Accuracy

Functional testing validates whether the Voice AI behaves as expected.

Intent Recognition Testing

The AI must correctly understand user requests.

Example:

| User Input | Expected Intent |
| --- | --- |
| “Book a flight to Chicago” | Flight Booking |
| “What’s my account balance?” | Account Inquiry |

QA teams should test:

- Similar phrases

- Slang

- Regional wording

- Ambiguous requests
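
The variations above can be organized as a table of utterances and expected intents. The sketch below is illustrative only: `classify_intent` is a hypothetical keyword matcher standing in for the real NLU call, and the intent labels are assumed names.

```python
# Hypothetical stand-in for the system under test; a real suite would call
# the application's NLU endpoint here instead of matching keywords.
KEYWORDS = {
    "flight_booking": ("flight", "fly", "plane"),
    "account_inquiry": ("balance", "account"),
}

def classify_intent(utterance: str) -> str:
    text = utterance.lower()
    for intent, words in KEYWORDS.items():
        if any(word in text for word in words):
            return intent
    return "fallback"

# Paraphrases and informal wording mapped to the intent they should trigger.
TEST_CASES = [
    ("Book a flight to Chicago", "flight_booking"),
    ("I wanna fly to Chicago", "flight_booking"),
    ("Get me on a plane to Chicago", "flight_booking"),
    ("What's my account balance?", "account_inquiry"),
    ("How much money have I got?", "account_inquiry"),  # informal phrasing
]

def run_intent_suite(cases):
    """Return (utterance, expected, actual) for every misclassified case."""
    failures = []
    for utterance, expected in cases:
        actual = classify_intent(utterance)
        if actual != expected:
            failures.append((utterance, expected, actual))
    return failures

failures = run_intent_suite(TEST_CASES)
for utterance, expected, actual in failures:
    print(f"MISS: {utterance!r} expected={expected} actual={actual}")
```

The informal phrasing is misrouted to the fallback by this toy matcher, which is the kind of gap a paraphrase table is meant to surface.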

Entity Extraction Validation

Entities include:

- Dates

- Locations

- Names

- Product IDs

Example:

“Schedule a meeting tomorrow at 3 PM.”

Entities:

- Date = Tomorrow

- Time = 3 PM
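
A check for this example might look like the sketch below. The `extract_entities` function is a toy extractor written for illustration, not any particular NLU library. Passing `today` explicitly keeps the relative date "tomorrow" deterministic in tests.

```python
import re
from datetime import date, timedelta

def extract_entities(utterance: str, today: date) -> dict:
    """Toy extractor for relative dates and 12-hour clock times."""
    entities = {}
    text = utterance.lower()
    if "tomorrow" in text:
        entities["date"] = today + timedelta(days=1)
    elif "today" in text:
        entities["date"] = today
    # Matches "3 pm", "3pm", or "3:30 pm".
    match = re.search(r"\b(\d{1,2})(?::(\d{2}))?\s*(am|pm)\b", text)
    if match:
        hour = int(match.group(1)) % 12
        if match.group(3) == "pm":
            hour += 12
        entities["time"] = f"{hour:02d}:{match.group(2) or '00'}"
    return entities

result = extract_entities(
    "Schedule a meeting tomorrow at 3 PM.", today=date(2026, 5, 9)
)
print(result)
```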

Multi-Turn Dialogue Testing

Voice AI systems should maintain conversational context.

Example:

User: “Book a hotel in Boston.”

AI: “What dates?”

User: “This weekend.”

The AI should remember the original request.
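
One way to express this as an automated check is to replay the turns against a session object and assert on the final state. `HotelBookingSession` below is a hypothetical, deliberately simplified dialogue state. A real test would drive the actual orchestrator through its own API.

```python
class HotelBookingSession:
    """Toy dialogue state; a hypothetical stand-in for the real orchestrator."""

    def __init__(self):
        self.slots = {"intent": None, "city": None, "dates": None}

    def handle(self, utterance: str) -> str:
        text = utterance.lower().rstrip(".")
        if "book a hotel in " in text:
            self.slots["intent"] = "hotel_booking"
            self.slots["city"] = text.split("book a hotel in ", 1)[1].title()
            return "What dates?"
        # A follow-up only makes sense if the earlier turn was remembered.
        if self.slots["intent"] == "hotel_booking" and self.slots["dates"] is None:
            self.slots["dates"] = text
            return f"Booking a hotel in {self.slots['city']} for {self.slots['dates']}."
        return "Sorry, could you rephrase that?"

session = HotelBookingSession()
first_reply = session.handle("Book a hotel in Boston.")
second_reply = session.handle("This weekend.")
print(first_reply)
print(second_reply)
```

The second assertion in a real suite would target the city slot: if "Boston" is lost between turns, context retention has failed even when the reply sounds plausible.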

Context Awareness

Test whether the AI:

- Retains memory

- Handles follow-up questions

- Supports interruptions

- Recovers gracefully

Speech Recognition and Audio Validation

Speech recognition testing is one of the most critical areas in evaluating Voice AI applications.

Accent Diversity Testing

Users speak differently across regions.

QA teams should test:

- American accents

- British accents

- Indian accents

- Australian accents

- Non-native speakers

Noise Resilience Testing

Real-world environments are noisy.

Test scenarios should include:

- Traffic sounds

- Office chatter

- Wind noise

- Echo environments
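
A common way to make these scenarios repeatable is to mix recorded noise into clean speech at a controlled signal-to-noise ratio (SNR) and then measure recognition accuracy at each level. The sketch below uses plain Python lists and synthetic signals as stand-ins for real audio. The function names are illustrative.

```python
import math
import random

def rms(samples):
    """Root-mean-square amplitude of a sample sequence."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def mix_at_snr(speech, noise, snr_db):
    """Scale noise so the speech-to-noise ratio equals snr_db, then add it."""
    if len(noise) < len(speech):
        raise ValueError("noise clip must be at least as long as the speech clip")
    noise = noise[: len(speech)]
    target_noise_rms = rms(speech) / (10 ** (snr_db / 20))
    gain = target_noise_rms / rms(noise)
    return [s + gain * n for s, n in zip(speech, noise)]

# Synthetic stand-ins: a 220 Hz tone for speech, uniform noise for traffic.
random.seed(0)
speech = [math.sin(2 * math.pi * 220 * t / 16000) for t in range(16000)]
noise = [random.uniform(-1, 1) for _ in range(16000)]

noisy = mix_at_snr(speech, noise, snr_db=10)
```

Running the same utterances at, say, 20 dB, 10 dB, and 0 dB gives a curve of accuracy against noise level rather than a single pass or fail result.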

Pronunciation Testing

Users pronounce words differently.

Examples:

- “Data”

- “Route”

- Brand names

- Industry jargon

Multilingual Support

If the Voice AI supports multiple languages, test:

- Translation accuracy

- Context switching

- Regional dialects

Conversational Experience Testing

A Voice AI application should feel natural and intuitive.

Human-Like Interaction Quality

QA teams should evaluate:

- Response tone

- Conversational pacing

- Natural pauses

- Personality consistency

Fallback Response Testing

When the AI doesn’t understand a request, fallback handling matters.

Poor fallback:

“I didn’t understand.”

Better fallback:

“I’m sorry, could you rephrase that question?”

Error Handling Validation

Test how the AI handles:

- Invalid input

- Silence

- Interruptions

- Unexpected responses
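
Silence and invalid input are easy to script. The sketch below assumes a simple policy in which the assistant reprompts a limited number of times and then hands off, so that it cannot loop endlessly. The class, the threshold, and the outcome labels are all hypothetical.

```python
MAX_CONSECUTIVE_FAILURES = 2  # assumed threshold; tune per product

class FallbackPolicy:
    """Reprompt on unusable input, then hand off instead of looping."""

    def __init__(self):
        self.consecutive_failures = 0

    def respond(self, transcript):
        # None models silence; an empty or whitespace transcript models noise.
        if transcript is None or not transcript.strip():
            self.consecutive_failures += 1
            if self.consecutive_failures > MAX_CONSECUTIVE_FAILURES:
                return "handoff_to_agent"
            return "reprompt"
        self.consecutive_failures = 0
        return "continue"

policy = FallbackPolicy()
outcomes = [policy.respond(t) for t in (None, "   ", "", "check my balance")]
print(outcomes)
```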

Conversation Continuity

Users may switch topics during conversations.

The AI should adapt smoothly without losing context.

Performance Engineering for Voice AI

Performance issues can destroy user trust instantly.

Latency Testing

Voice AI systems must respond quickly.

Recommended benchmarks:

- Under 300 ms for speech recognition

- Under 2 seconds for complete response
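
Budgets like these are usually checked against a percentile rather than an average, since a few slow turns are what users notice. The sketch below times a placeholder call and compares the 95th percentile with the budget. `fake_stt` is a hypothetical stand-in for a real recognition request.

```python
import statistics
import time

STT_BUDGET_S = 0.300      # speech recognition budget from the list above
RESPONSE_BUDGET_S = 2.0   # end-to-end response budget from the list above

def measure_latencies(call, runs=20):
    """Time repeated calls and return the latencies in seconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        call()
        latencies.append(time.perf_counter() - start)
    return latencies

def p95(latencies):
    """95th percentile via statistics.quantiles (needs at least two samples)."""
    return statistics.quantiles(latencies, n=20)[-1]

def fake_stt():
    # Hypothetical stand-in for a real STT request.
    time.sleep(0.01)

stt_latencies = measure_latencies(fake_stt)
stt_p95 = p95(stt_latencies)
within_budget = stt_p95 < STT_BUDGET_S
print(f"STT p95: {stt_p95 * 1000:.1f} ms, within budget: {within_budget}")
```

The same helper can wrap the full request path and be compared against `RESPONSE_BUDGET_S`.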

Scalability Validation

Can the system handle thousands of users simultaneously?

QA teams should simulate:

- Peak traffic

- Concurrent sessions

- Heavy conversational loads

Concurrent User Simulation

Use load-testing platforms to evaluate:

- CPU utilization

- Memory usage

- API bottlenecks
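
Dedicated load-testing platforms do this at scale, but the idea can be sketched in a few lines with `asyncio`. Each simulated session below awaits a short random delay in place of a real backend round trip. The session shape and timings are assumptions for illustration.

```python
import asyncio
import random
import time

async def simulated_session(session_id: int, turns: int = 3) -> float:
    """Hypothetical session: each turn awaits a fake backend round trip."""
    start = time.perf_counter()
    for _ in range(turns):
        await asyncio.sleep(random.uniform(0.01, 0.03))  # stand-in for a request
    return time.perf_counter() - start

async def run_load(concurrent_sessions: int):
    """Run all sessions concurrently and report per-session and total time."""
    started = time.perf_counter()
    durations = await asyncio.gather(
        *(simulated_session(i) for i in range(concurrent_sessions))
    )
    wall_clock = time.perf_counter() - started
    return durations, wall_clock

durations, wall_clock = asyncio.run(run_load(200))
print(f"{len(durations)} sessions, slowest {max(durations):.3f}s, "
      f"wall clock {wall_clock:.3f}s")
```

Against a real system, the interesting signal is how the slowest session duration grows as `concurrent_sessions` increases, alongside the CPU and memory figures collected on the server side.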

Real-Time Performance Monitoring

Continuous monitoring helps identify:

- Delays

- Failure spikes

- Audio processing bottlenecks

Security and Compliance Validation

Voice AI applications often process sensitive information.

Security testing is non-negotiable.

Data Encryption

Test encryption for:

- Voice recordings

- API communication

- User credentials

Voice Biometric Protection

If voice authentication is used, QA teams should validate:

- Spoofing resistance

- Replay attack prevention

- Authentication accuracy

GDPR and Compliance Testing

Ensure compliance with:

- GDPR

- HIPAA

- CCPA

- PCI DSS

Authentication Workflows

Test:

- Secure login

- Multi-factor authentication

- Session handling

Accessibility and User Experience

Voice AI should work for everyone.

Inclusive Design Testing

Ensure accessibility for:

- Elderly users

- Visually impaired users

- Users with speech challenges

Voice Clarity Evaluation

Test speech output for:

- Pronunciation clarity

- Volume balance

- Natural rhythm

Accessibility Standards

QA teams should align with standards such as:

- WCAG accessibility guidelines

- Voice interaction usability standards

Customer Satisfaction Metrics

Measure:

- User engagement

- Session completion

- Customer frustration signals

Key Metrics QA Teams Should Measure

Metrics help quantify Voice AI quality.

1. Word Error Rate (WER)

Measures speech recognition accuracy.

Formula:

WER = (Substitutions + Insertions + Deletions) / Total Words in the Reference Transcript

Lower WER means better recognition accuracy.
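
As a minimal sketch, WER can be computed with a word-level edit distance between the reference transcript and the recognizer output. The lowercasing and whitespace tokenization here are simplifying assumptions. Production scoring usually applies more careful text normalization first.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (S + D + I) / N, where N is the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] is the edit distance between the reference words processed
    # so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (ref_word != hyp_word)
            deletion = prev[j] + 1
            insertion = curr[j - 1] + 1
            curr[j] = min(substitution, deletion, insertion)
        prev = curr
    return prev[-1] / len(ref)

# One dropped word out of five reference words.
print(word_error_rate("book a flight to chicago", "book flight to chicago"))
```

Because insertions count as errors, WER can exceed 1.0 when the hypothesis is much longer than the reference.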

2. Intent Accuracy

Measures how often the AI correctly identifies user intent.

Target benchmark:

- 90%+ for enterprise systems
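
A single accuracy figure can hide a weak intent, so it helps to report a per-intent breakdown alongside the overall number. The sketch below assumes labeled test utterances with expected and predicted intents. The labels and data are illustrative.

```python
from collections import defaultdict

TARGET_ACCURACY = 0.90  # benchmark stated above

def intent_accuracy(expected, predicted):
    """Return (overall accuracy, accuracy per expected intent)."""
    if not expected or len(expected) != len(predicted):
        raise ValueError("expected and predicted must be non-empty and equal length")
    totals, hits = defaultdict(int), defaultdict(int)
    for exp, pred in zip(expected, predicted):
        totals[exp] += 1
        hits[exp] += exp == pred
    overall = sum(hits.values()) / len(expected)
    per_intent = {intent: hits[intent] / totals[intent] for intent in totals}
    return overall, per_intent

expected = ["flight_booking"] * 6 + ["account_inquiry"] * 4
predicted = ["flight_booking"] * 6 + ["account_inquiry"] * 3 + ["fallback"]

overall, per_intent = intent_accuracy(expected, predicted)
meets_target = overall >= TARGET_ACCURACY
print(overall, per_intent, meets_target)
```

In this made-up sample the overall figure meets the target while one intent sits well below it, which is the case the breakdown is there to catch.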

3. Task Completion Rate

Tracks whether users successfully complete tasks.

Examples:

- Booking appointments

- Processing payments

- Resolving support requests
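
Given session logs that record which task was attempted and whether it finished, the rate is a simple ratio per task. The log format below is an assumption made for illustration.

```python
from collections import Counter

# Hypothetical session log: one record per attempted task.
SESSIONS = [
    {"task": "book_appointment", "completed": True},
    {"task": "book_appointment", "completed": False},
    {"task": "process_payment", "completed": True},
    {"task": "process_payment", "completed": True},
    {"task": "resolve_support_request", "completed": False},
]

def task_completion_rates(sessions):
    """Completed sessions divided by started sessions, per task."""
    started = Counter(s["task"] for s in sessions)
    completed = Counter(s["task"] for s in sessions if s["completed"])
    return {task: completed[task] / started[task] for task in started}

rates = task_completion_rates(SESSIONS)
overall = sum(s["completed"] for s in SESSIONS) / len(SESSIONS)
print(rates, overall)
```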

4. Response Time

Measures AI responsiveness.

Slow responses lead to poor user experiences.

5. Customer Satisfaction Score (CSAT)

Gather feedback through:

- Surveys

- Ratings

- Conversation reviews

Recommended Tools for Voice AI Testing

Here are some powerful tools QA teams can use.

Botium

Excellent for:

- Conversational testing

- Automated chatbot validation

- NLP testing

Cyara

Widely used for:

- Voice testing

- Contact center AI validation

- IVR testing

Google Dialogflow Simulator

Useful for:

- Intent testing

- NLP debugging

- Conversation simulation

Amazon Lex Testing Tools

Helpful for:

- AWS voice bot testing

- Conversational flow validation

Selenium Integrations

Supports:

- Browser-based UI automation

- Workflow validation

- Integrated testing

Load Testing Platforms

For scalability testing, use tools like:

- JMeter

- LoadRunner

- k6

Common Challenges in Voice AI Testing

Voice AI introduces unique QA complexities.

Diverse Accents and Dialects

Speech recognition can struggle with regional pronunciation differences.

Emotional Speech

Angry, excited, or stressed users may speak unpredictably.

Ambiguous User Intent

Users often phrase requests unclearly.

Example:

“I need help with my account.”

Which account issue exactly?

Dataset Bias

Poor training data leads to biased AI responses.

Background Noise Interference

Real-world environments remain difficult for speech engines.

Best Practices for Enterprise QA Teams

Successful Voice AI testing requires a strategic approach.

1. Implement Continuous AI Testing

AI systems evolve continuously.

QA should include:

- Regression testing

- Continuous monitoring

- Automated validation
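
A regression gate can be as simple as comparing a candidate model's metrics with a stored baseline and flagging any metric that gets worse by more than a tolerance. The metric names, values, and tolerance below are illustrative assumptions.

```python
# Hypothetical baseline metrics recorded from the current production model.
BASELINE = {"wer": 0.08, "intent_accuracy": 0.93, "task_completion": 0.88}

# WER improves when it goes down; the other two improve when they go up.
HIGHER_IS_BETTER = {"wer": False, "intent_accuracy": True, "task_completion": True}

def find_regressions(baseline, candidate, tolerance=0.01):
    """Return {metric: (old, new)} for metrics that worsened beyond tolerance."""
    regressions = {}
    for metric, old in baseline.items():
        new = candidate[metric]
        improvement = new - old if HIGHER_IS_BETTER[metric] else old - new
        if improvement < -tolerance:
            regressions[metric] = (old, new)
    return regressions

candidate = {"wer": 0.11, "intent_accuracy": 0.935, "task_completion": 0.875}
regressions = find_regressions(BASELINE, candidate)
print(regressions)
```

Run in a CI pipeline, a non-empty result would block the model update until the regression is reviewed.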

2. Use Real-World Conversational Data

Synthetic testing alone isn’t enough.

Include:

- Actual user conversations

- Diverse demographics

- Real environments

3. Adopt Automation-First QA

Automation improves:

- Speed

- Coverage

- Consistency

This aligns closely with Trusys.ai’s intelligent automation philosophy.

4. Perform Cross-Platform Testing

Test Voice AI across:

- Smartphones

- Smart speakers

- Desktop apps

- Automotive systems

5. Maintain Human-in-the-Loop Validation

Human reviewers remain essential for:

- Emotional context

- Conversational nuance

- Edge-case analysis

Future of Voice AI Quality Engineering

Voice AI testing is evolving rapidly.

Generative AI Voice Systems

AI-generated conversations are becoming more dynamic and personalized.

Emotion-Aware AI Testing

Future systems will detect:

- Tone

- Sentiment

- Emotional state

Autonomous QA Automation

AI-driven testing platforms will automatically generate test cases.

Multilingual Conversational AI

Global businesses require:

- Real-time translation

- Language adaptation

- Cultural context awareness

FAQs

1. What is Voice AI testing?

Voice AI testing evaluates how accurately and effectively a conversational AI system understands speech, processes intent, and responds to users.

2. Why is QA important for Voice AI applications?

QA ensures Voice AI systems remain accurate, secure, scalable, and user-friendly while reducing business risks and customer frustration.

3. What is Word Error Rate (WER)?

WER measures speech recognition accuracy by calculating errors in transcribed speech compared to the original spoken words.

4. Which tools are commonly used for Voice AI testing?

Popular tools include Botium, Cyara, Dialogflow simulator, Amazon Lex tools, Selenium, and performance testing platforms like JMeter.

5. How can enterprises improve Voice AI quality assurance?

Enterprises can improve QA by using automation, real-world datasets, continuous testing strategies, and scalable AI testing frameworks.

Final Thoughts

As Voice AI adoption accelerates, enterprises can no longer rely on traditional QA methods alone. Evaluating conversational AI systems requires a specialized framework that combines functional testing, speech validation, performance engineering, security testing, and human-centered usability evaluation.

Organizations that invest in scalable Voice AI Quality Assurance gain a major competitive advantage by delivering more reliable, intelligent, and engaging user experiences.

With expertise in AI quality engineering, intelligent automation, and enterprise-scale testing strategies, Trusys.ai helps organizations build Voice AI systems that are not only innovative but also dependable and production-ready.
